Motion capture from video files has improved over time, with some recent new entrants in the field, so I thought I would give the tools another quick try to see whether the quality was good enough for my goals. I am creating an online animated cartoon as a hobby project, although I admit I spend much more time on the technology than the episodes!

TL;DR: The tools are much better, but I am not sure they are good enough for my liking yet (although I might not have everything calibrated right).

DeepMotion

I have used DeepMotion a few times with varying degrees of success. It does have some features I really like:

- It can do face and hand capture at the same time as body capture

- You can upload your character and it will take physics into account so hands do not go inside the body etc – this is really useful as my models do not have exact human proportions (e.g. head is bigger than real life)

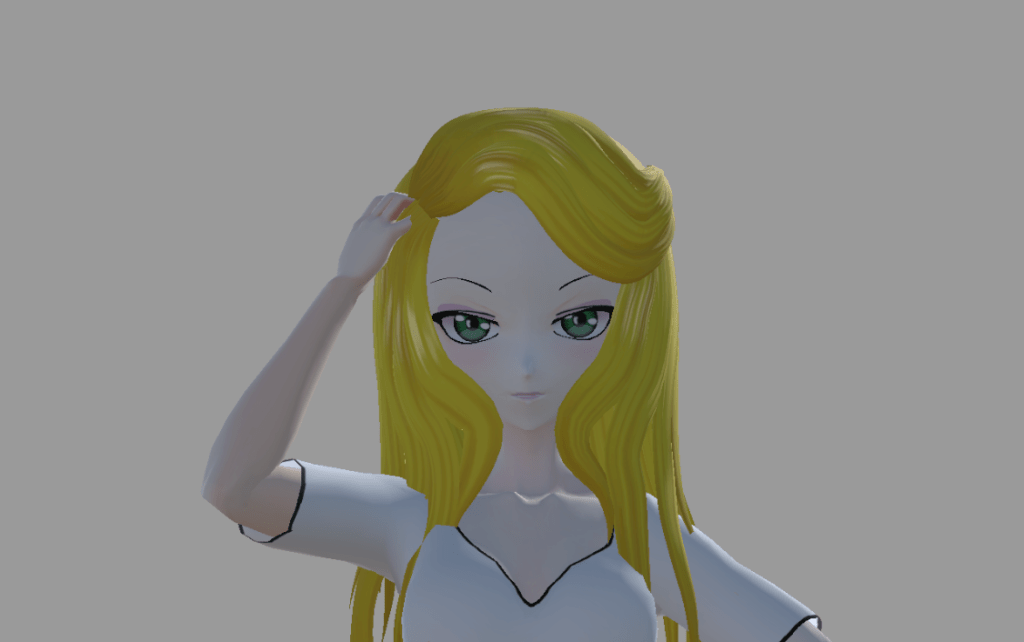

Example with physics on – the hand scratches the outside of the head correctly.

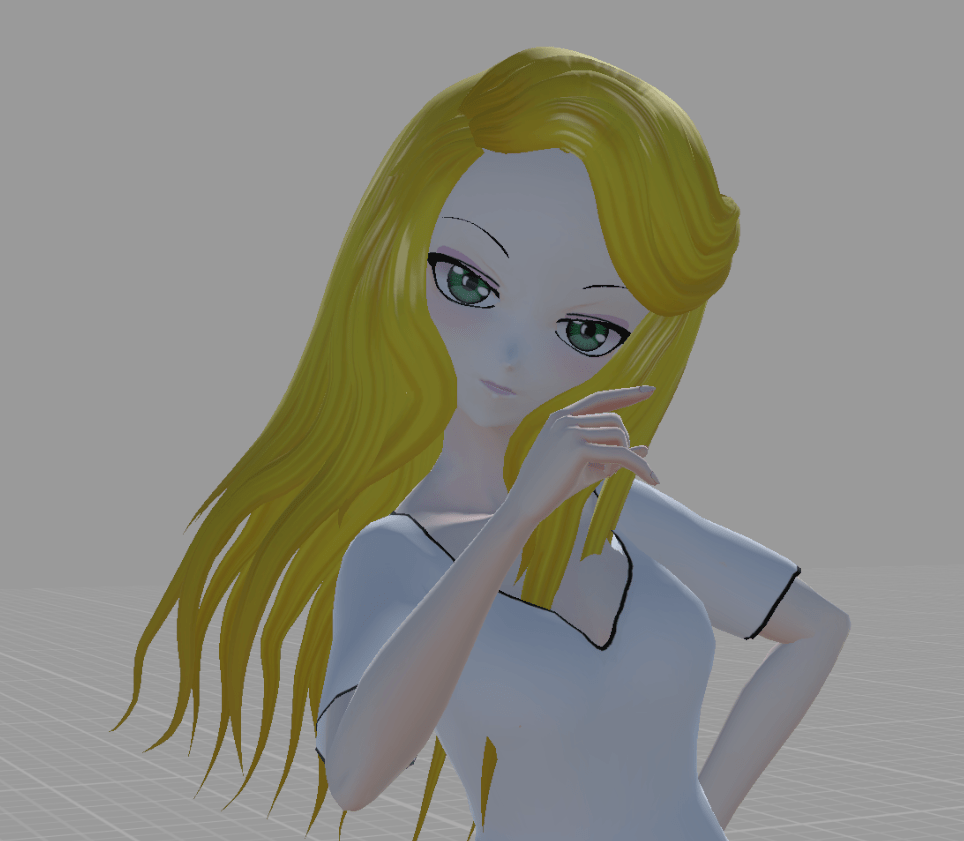

Without physics on, the hand goes inside the head because the proportions of the model are different from those of the original person in the video. (Go on, say it, you know you want to, maybe she should not have such a big head!)

The beta hand tracking is also pretty nice. I was actually pointing directly at the camera for the shot below, but getting finger movements in, even if not perfect, does add a lot to the depth of the animation.

My problems with it

- While good, capturing from a video still is not “great” quality

- Fingers (in beta) are pretty good, but not precise

- I still have problems with the feet either moving or overlapping – although I sometimes wonder if I have a Unity setting wrong

- I want to do lots of clips – you can really burn through the credits if you are not careful (financial consideration, not technical)

But it was much, much better than the last time I tried. I was not that unhappy with the results; it is more that I would probably want to edit the clips to fix the mistakes it was making.

Note: I did not set up my character for facial expression tracking, so I did not try that out.

Rokoko Studio

Rokoko have introduced a new body capture feature that works from a webcam or a video file, so I thought I would give it a go.

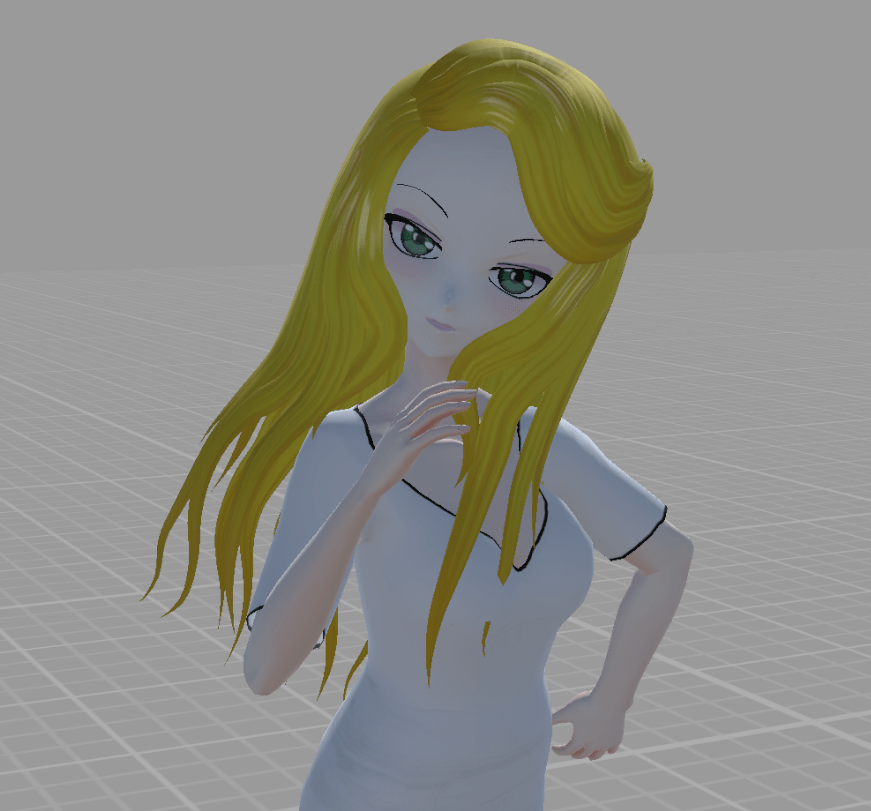

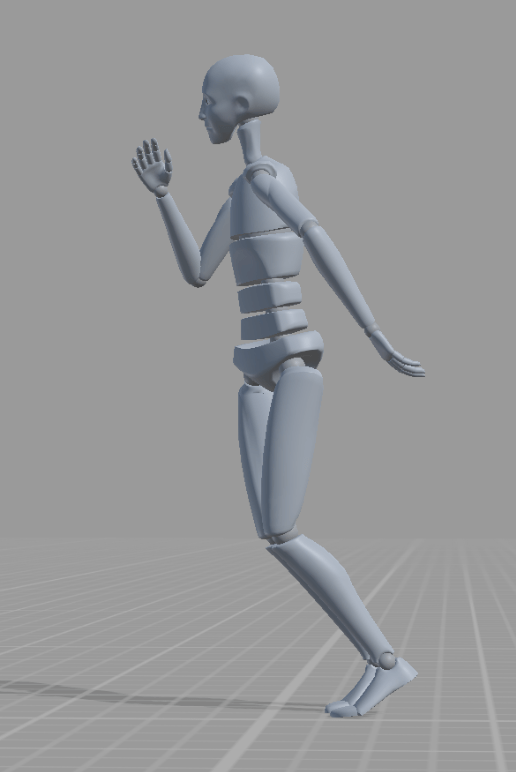

It does not include finger tracking from video, but the rest of the poses are pretty good.

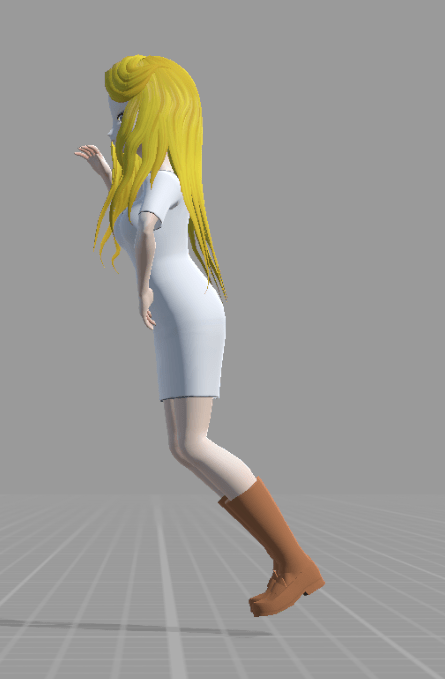

The biggest problem I had in my quick test was that, even though I thought I had turned foot posing on, the feet are floating off the ground after converting to humanoid.

This does not happen with the original character, although it is leaning forward a bit. (I had the camera on a slight angle, but redoing the capture with the camera perfectly upright still left the character leaning forwards. I have had this in DeepMotion as well.)

Move.ai

I applied for the beta, but it recommends multiple cameras going at once, which means more work to set up and calibrate. That might be beyond what I am willing to do on my little hobby project. I am after reasonable quality with good work velocity, without having to set up dedicated areas of the house for recording.

Cascadeur

Not video capture, but Cascadeur has also been making progress. You pose using your mouse on the computer, but they have been adding more physics modeling and AI support so you can create fewer keyframes with better quality results. Looks pretty good really. If I was going to go for serious animation, I would seriously consider using this tool. I normally use existing animation clips and add a bit of movement to them – lower quality, but faster to create.

Conclusions

I am using a combination of existing animation recordings plus either VR controllers or webcam VTuber software (VSeeFace with LeapMotion camera) to move the upper body and hands. So far this has given me the most robust solution (it needs the least amount of fiddling), but the workflow of recording, seeing the result, and trying again is still not great. The biggest pain for me using VR is the need to put the headset on and then take it off for each recording while I am editing.

So even though I am not planning on moving to the video approaches above, I am not saying they are much worse than what I am actually doing to create my animations. This is why I am hanging out for the Sony Mocopi devices. I don’t expect them to be great quality either, but if they can speed up my workflow (no need to put the VR headset on/off per retake), then they may be an appealing solution.

But my summary is that these technologies are definitely improving. For me, the challenge is finding an overall workflow where I can spend more time creating content without it being too painful and repetitive, while staying flexible enough that I can do more advanced shots and effects in Unity.