In this post I am collecting notes from my experiments with MIDI control of Character Animator, in case they are useful to anyone else. I am still working on this and learning how it all works. Expert opinions welcome!

Please note I plan to come back and edit this page as I learn more.

MIDI

MIDI is a 30+ year old (?!) protocol that lets musical instruments (like synthesizers) exchange digital signals with each other and with computers. Lots of keyboards support MIDI, as do synthesizers, drum machines and so on. There are events such as NoteOn and NoteOff, plus “Control” events for things like adjusting a volume slider or a pitch bend wheel. (There is a lot more for advanced music control, timing, etc – but I don’t think any of it is relevant to Character Animator. It’s just the note events and control events that matter.)
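To make the note and control events a bit more concrete, here is a minimal Python sketch of how the three messages look as raw bytes. The status bytes (0x90 for Note On, 0x80 for Note Off, 0xB0 for Control Change) and the 7-bit data range come from the MIDI 1.0 specification; the function names are my own, made up for illustration, and nothing here talks to a real device.

```python
def _check(value: int, limit: int, what: str) -> int:
    """Return value unchanged if it lies in 0..limit, else raise ValueError."""
    if not 0 <= value <= limit:
        raise ValueError(f"{what} must be 0..{limit}, got {value}")
    return value


def note_on(channel: int, note: int, velocity: int = 100) -> bytes:
    """Note On: status 0x90 plus channel, then note number and velocity."""
    return bytes([0x90 | _check(channel, 15, "channel"),
                  _check(note, 127, "note"),
                  _check(velocity, 127, "velocity")])


def note_off(channel: int, note: int, velocity: int = 0) -> bytes:
    """Note Off: status 0x80 plus channel, then note number and velocity."""
    return bytes([0x80 | _check(channel, 15, "channel"),
                  _check(note, 127, "note"),
                  _check(velocity, 127, "velocity")])


def control_change(channel: int, controller: int, value: int) -> bytes:
    """Control Change: status 0xB0 plus channel, then controller and value."""
    return bytes([0xB0 | _check(channel, 15, "channel"),
                  _check(controller, 127, "controller"),
                  _check(value, 127, "value")])


if __name__ == "__main__":
    print(note_on(0, 60).hex(" "))              # 90 3c 64
    print(note_off(0, 60).hex(" "))             # 80 3c 00
    print(control_change(0, 7, 127).hex(" "))   # b0 07 7f
```

Channels are numbered 0 to 15 on the wire, even though most software displays them as 1 to 16.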

Going a bit further (with gross oversimplifications), Windows and Mac allow MIDI devices to be registered as “sources” of MIDI events. For example, I have a low-end MIDI keyboard, an M-Audio KeyStudio. It has piano keys, a pitch bender, and a volume control. It cannot make any sounds – it just generates MIDI events to feed into a computer.

![416CY5ligUL[1]](https://extra-ordinary.tv/wp-content/uploads/2018/09/416cy5ligul1-e1538170034711.jpg?w=748)

A program listening for MIDI events (such as synthesizer software) can ask the operating system what devices are available. It can then choose which devices to listen to. (It might show the user what devices exist, allowing them to select one or more to listen to.)

Other programs can generate MIDI events, sending the output to a MIDI device. For example, most [musical] keyboards can normally generate sounds (with a bank of different instrument sounds built in). Sending MIDI events to those devices plays the notes. This allows interesting combinations, such as having a MIDI drum synthesizer controlled by a piano keyboard, so different sounds can all be triggered from the one keyboard.

Other Physical MIDI Devices

There is a range of MIDI compatible devices on the market these days, physical keyboards being common. But there are also other interesting devices such as “Hot Hand Wireless”, a ring you can put on your finger and wave around to generate MIDI control events. I may buy one to have a play, but have not done so yet. From the screenshots it looks like it can capture the X/Y/Z coordinates of the hand wearing the ring, which could be rather cool for controlling the X and Y coordinates of a puppet hand. (I think the intended market is DJs, where hand gestures can play notes and change effects.)

On Windows, when I plugged in my KeyStudio MIDI keyboard, it installed a Windows device driver automatically. These device drivers are what make the external physical device visible to applications on Windows (and I assume something similar is true for Macs as well).

One of the monthly Adobe “Tips and Tricks” episodes showed some other cool devices that could be clicked together in different configurations to generate MIDI events – buttons, sliders, and so forth.

Character Animator Bindings

So what has this all got to do with Character Animator? To be clear, it has nothing to do with music or sounds. What Character Animator can do is use the same MIDI protocol to control puppets.

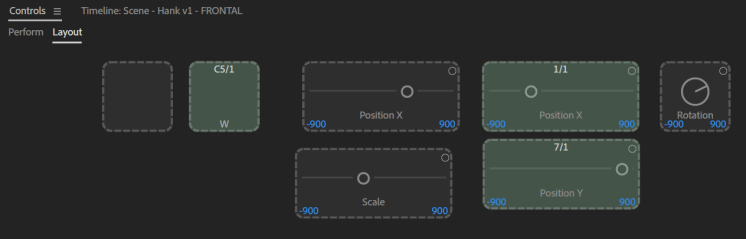

The “Controls” panel in Character Animator can be used to associate MIDI events with different controls. For example, a trigger can have a MIDI note event (like C5 – the “C” note in the 5th octave) associated with it, or a knob can have a “Control” associated with it to receive the numeric value of a MIDI control.

In the above screenshot, note “C5” from MIDI device 1 is associated with the same trigger as the “W” key. The Position X and Y sliders are bound to controls 1 and 7 of device 1. These look like MIDI controller numbers: in the standard assignments, controller 1 is the modulation wheel and controller 7 is volume, which fits the wheel and volume knob on my KeyStudio MIDI keyboard.
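One thing to watch when generating note events from code is that a name like “C5” does not map to a single MIDI note number. Software disagrees on which octave holds middle C (note 60). The sketch below is a hypothetical Python helper (the function name and the `middle_c_octave` parameter are my own) that converts a note name to a number under whichever convention you pick. I have not confirmed which convention Character Animator uses, so treat the default as an assumption.

```python
import re

# Semitone offsets of the natural notes within one octave.
_SEMITONES = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}
_NOTE_RE = re.compile(r"^([A-Ga-g])([#b]?)(-?\d+)$")


def note_number(name: str, middle_c_octave: int = 4) -> int:
    """Convert a name such as 'C5' or 'F#3' to a MIDI note number.

    middle_c_octave is the octave label given to note 60 (middle C).
    Assumption: 4 is a common choice, but some software uses 3 or 5.
    """
    match = _NOTE_RE.match(name.strip())
    if match is None:
        raise ValueError(f"cannot parse note name: {name!r}")
    letter, accidental, octave = match.groups()
    number = 60 + (int(octave) - middle_c_octave) * 12
    number += _SEMITONES[letter.upper()]
    number += {"#": 1, "b": -1, "": 0}[accidental]
    if not 0 <= number <= 127:
        raise ValueError(f"{name!r} is outside the MIDI range 0..127")
    return number


if __name__ == "__main__":
    print(note_number("C5"))                     # 72 when middle C is C4
    print(note_number("C5", middle_c_octave=5))  # 60 when middle C is C5
```

If a trigger does not fire for the note you expect, trying the same name one octave up or down is a quick way to find out which convention is in play.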

So, using my MIDI keyboard I can now have >50 trigger keys, and two sliders. This could be useful for a professional animator – you could put stickers on all the keys for example, and free up your computer keyboard for other things.

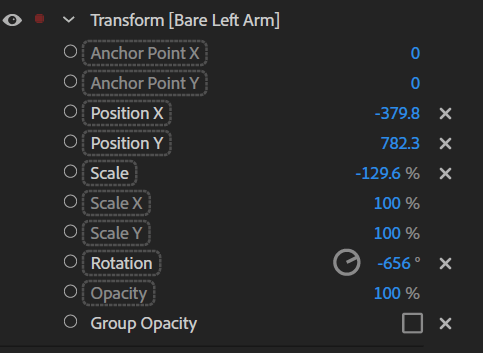

The end result is I can control the hand position of a puppet using my keyboard sliders. Based on advice from the forums, I dropped a Transform behavior on the hand of a puppet, dragged the behavior properties over to the Controls panel (causing the above to appear), then used the MIDI keyboard to generate a MIDI event. That caused Character Animator to bind that event to that Controls panel widget.

Note that any of the behaviors with the box around them can be dragged to the control panel and have MIDI events assigned to them. In the following screenshot Position X, Position Y, Scale, and Rotation are white indicating they are in the control panel already.

Cute, but not necessarily that useful. Using a pitch modulation wheel and volume knob to control X/Y positions of a single hand is not a very pleasant experience. But something like the “XY MIDIpad” device might be more useful.

Virtual MIDI Devices

This is where life gets funky. In my case, I have an HTC Vive on another computer that the kids have taken over. It’s a VR headset with hand controllers. You can also get additional tracking devices. The HTC Vive makes an SDK available so you can capture the positional events of various trackers yourself.

Some VTubers (Virtual YouTubers) use the HTC Vive to control their 3D animated characters on their YouTube channels – a big trend in anime-loving Japan in particular. You can even get a range of “MoCap” suits and rigs that capture your body movements. A range of HTC Vive straps to attach the additional HTC Vive trackers to arms, knees, waist etc are available on Amazon and other places.

I was curious about how to feed devices such as these into Character Animator, which does not provide an API. The answer appears to be to use the MIDI support. My first attempt was to get some code to generate MIDI events and see if Character Animator spotted them. It was a failure. Finding demo code was easy, but the code connected to a local MIDI output device and sent it MIDI events. Character Animator does not see such events. It only sees MIDI events coming from MIDI input devices.

So the trick is to register a MIDI device with the operating system for Character Animator to detect. Character Animator appears to watch all of the MIDI devices attached to the computer. Mac supports this natively in the OS – there is an API to register a “virtual” MIDI device. Unfortunately on Windows it’s a bit harder.

Windows Virtual MIDI Devices

On Windows, it appears that virtual MIDI devices can only be created by a device driver. A device driver is code typically loaded when a new piece of hardware is connected to a computer. The device driver is responsible for converting the external signals coming down the wires into something the operating system understands.

The good news is there are some free tools to help. Here are some tools I have found that look useful:

- loopMIDI: creates a local loopback device. You can send it MIDI events as a destination (e.g. pretending it will play them), and it spits the events straight back out as if it had created them. This makes them visible to Character Animator.

- rtpMIDI: can be used to connect two computers, sending MIDI events over an ethernet cable. That means I can plug my KeyStudio keyboard into one computer, and have the MIDI events appear on another computer. Further, the network protocol is publicly documented, so I should be able to generate network MIDI events and have rtpMIDI pick them up.

HTC Vive Integration

There is an OpenVR SDK which can be used to capture positional data. This returns X/Y/Z positional data, rotational data, button clicks, touchpad events, and more. The HTC Vive controllers are pretty nice devices. The other HTC Vive tracking devices don’t have the buttons or touchpads.

I have some initial code written in C# for Windows that converts the 3D positional data into 2D X/Y positions suitable for feeding into Character Animator. See https://github.com/alankent/htc-vive-midi. It sends MIDI events to loopMIDI (if I run Character Animator on the local machine) or rtpMIDI (if I run Character Animator on a second computer).

The end result is buttons on the handsets for common triggers, plus positional data for my two hands. Additional trackers could later be added for draggers for arms, legs, waist etc. See the README.md file in the GitHub project for more details on how to build and run the code.
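To illustrate the general idea (this is not the C# code from the GitHub project), here is a minimal Python sketch of one way to turn a tracked position into MIDI control values. It assumes the simplest possible mapping: ignore depth, clamp X and Y to a comfortable reach measured in meters, and scale each to the 0 to 127 range a Control Change message can carry. The ranges, the controller numbers 20 and 21, and the function names are all hypothetical choices for the example.

```python
from typing import List, Tuple


def axis_to_cc(value: float, lo: float, hi: float) -> int:
    """Scale value from the range lo..hi to an integer 0..127, clamping."""
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    t = (value - lo) / (hi - lo)
    t = min(1.0, max(0.0, t))       # clamp positions outside the range
    return int(t * 127 + 0.5)       # round to the nearest integer


def position_to_cc(
    x: float,
    y: float,
    x_range: Tuple[float, float] = (-1.0, 1.0),   # assumed sideways reach
    y_range: Tuple[float, float] = (0.5, 2.0),    # assumed height range
    channel: int = 0,
    x_controller: int = 20,   # hypothetical controller numbers
    y_controller: int = 21,
) -> List[bytes]:
    """Build two Control Change messages (0xB0) carrying X and Y."""
    status = 0xB0 | channel
    return [
        bytes([status, x_controller, axis_to_cc(x, *x_range)]),
        bytes([status, y_controller, axis_to_cc(y, *y_range)]),
    ]


if __name__ == "__main__":
    for message in position_to_cc(0.0, 1.25):
        print(message.hex(" "))
```

With only 128 steps per axis the motion is fairly coarse, so it helps to keep the ranges tight around where your hands actually move.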

The following is a first video. It attaches X/Y positions to Transform behaviors added to the hands. (I could add Z to the scale I guess.) Note that the hands do not rotate. I think that would be a logical next step. I was wondering about using the HTC Vive controller touch pad to control eye positions rather than webcam, plus a Vive tracker maybe for a chest dragger to move the body around better. But I might wait until Adobe Character Animator v2.0 comes out as some things are changing in this area.

I was also wondering about turning one of the two touchpads into a matrix of 9 additional buttons, leaving trigger buttons on each controller for hand positions. The idea is to maximize the potential of the two controllers for, say, live streaming, where you don’t want to put down the Vive controllers to hit a key on the keyboard.
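The 9 button idea could be sketched roughly as follows in Python. This assumes the touchpad reports X and Y each in the range -1 to 1 with Y pointing up (which is my understanding of what OpenVR returns, but I have not verified it here), and it maps each of the 3x3 cells to a note number starting from a hypothetical base note of 36. All names are made up for the example.

```python
def touchpad_cell(x: float, y: float, size: int = 3) -> int:
    """Return the grid cell (0..size*size-1) for a touch at x, y.

    Assumes x and y are each in -1..1 with y pointing up.
    Cell 0 is the top-left corner; cells are numbered row by row.
    """
    x = min(1.0, max(-1.0, x))
    y = min(1.0, max(-1.0, y))
    col = min(size - 1, int((x + 1.0) / 2.0 * size))
    row = min(size - 1, int((1.0 - y) / 2.0 * size))
    return row * size + col


def touchpad_note(x: float, y: float, base_note: int = 36) -> int:
    """Map a touch to one of 9 MIDI note numbers (base_note is arbitrary)."""
    return base_note + touchpad_cell(x, y)


if __name__ == "__main__":
    print(touchpad_cell(0.0, 0.0))    # 4, the center cell
    print(touchpad_cell(-1.0, 1.0))   # 0, the top-left cell
    print(touchpad_note(1.0, -1.0))   # 44, the bottom-right cell
```

Each note could then be bound to a separate trigger in Character Animator by pressing the matching area of the touchpad while the trigger is selected.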

Known Limitations

There do not seem to be MIDI controls in Character Animator for controlling the head position. That seems limited to the webcam. Facial expressions are also driven by the webcam and audio. So I suspect I won’t be able to use the HTC Vive headset – only the hand controllers. That might not be such a terrible thing however, as I would have to get the Character Animator screen sent to the HTC Vive headset for display to see what I was doing – which starts to sound like a bit more effort than I am willing to go to at this stage.

Very interesting. I think the biggest drawback of character animator is that the camera only takes input of the head- if you could do arms and hands it would be just a million times more friendly.

Have you seen these? https://www.amazon.com/IK-Multimedia-wearable-motion-control/dp/B00I0JJBOO


Yes, arms and body would be great. You would have to be a bit further back from camera maybe, but still useful.

I was doing it with HTC Vive controllers, but it was cumbersome picking them up and putting them down. The rings idea is cute. You can still grab the mouse quickly or use keyboard triggers. But the reviews were pretty bad on that device. There are others that use Bluetooth, which seems more reliable. One I looked at only had one dimension (x not y). The HTC Vive trackers are nice in that you get x, y, and rotation.


Great Read! I am more so looking into trying similar experiments in controlling animation. I appreciate you leaving these notes here, for they are great references. I want to see if midi can control adobe animate now!
