My personal goal is to create a semi-animated comic. In particular, I want to create a “web story” per episode of a series. I do not plan to create fully animated episodes, but instead to create pages with short animation clips, more like a comic book where the pictures move a bit (the eyes widen, a punch is thrown, etc.). There will also be no sound, at least initially: no speech, music, or sound effects. Speech bubbles or other visual cues will be used instead. The purpose is to reduce the effort required to create an episode.

Because this project is a hobby and I am not an artist, I am trying to leave character creation to VRoid Studio, and scene creation to Unity and purchases from its asset store, as much as possible. I am learning Unity from scratch for this project, trying to work out how to fit all the pieces together to create a web story.

Web Stories

A web story is a series of pages you can tap through, where each page can have static content (e.g. an image) or dynamic content (e.g. a video or animated transitions). It is a vertical format intended primarily for viewing on a mobile phone, although full-page desktop stories are also supported. The following web story from CNN on protecting the Antarctic shows a mix of still images and videos, overlaid with caption text.

Anime from 3D Animation Packages

I came across some real-life projects using 3D animation in an anime style that I thought I would share.

The first is The Animation of Guilty Gear Xrd & Dragon Ball FighterZ, a great exploratory video on how one of the leading companies is generating anime-quality results from 3D animation. If I were to summarize the video: it’s HARD to do well!

What this video did reinforce to me is that it is fine (even desirable) to create a comic that has a particular feel that is not anime. The video made the point that many 3D attempts that try to feel like anime look a bit creepy when they fall short. Some animations look better because they purposely do NOT attempt to look like more traditional anime. That made me feel a bit better. If the professional studios struggle with anime-quality computer-generated images, it is fine if I struggle too!

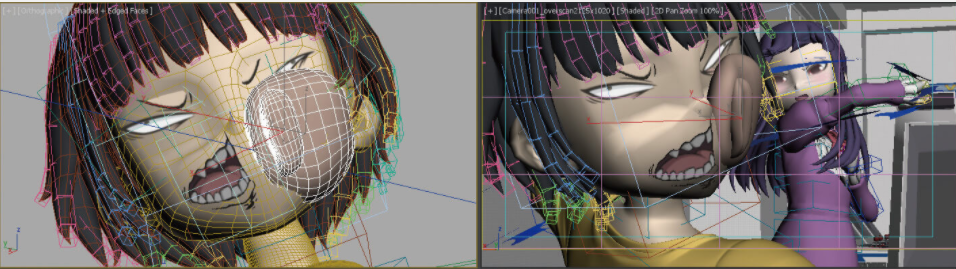

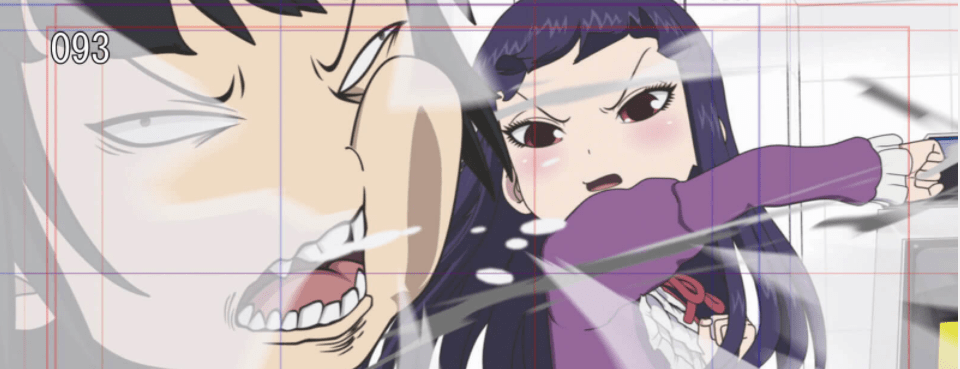

The next article came from a tweet: a behind-the-scenes look at the making of “Hi Score Girl”.

They use 3D generation with a fairly sophisticated pipeline behind the scenes.

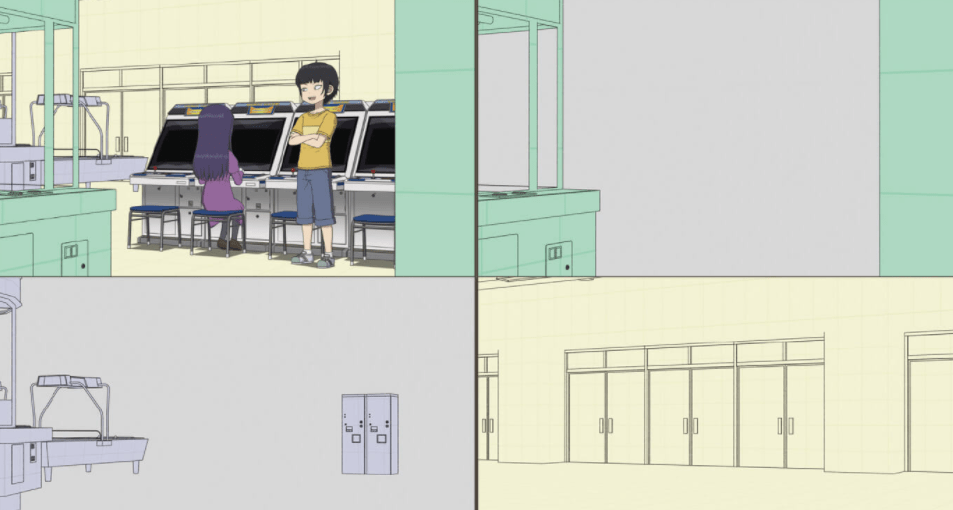

I found the full article (in Japanese, but Google Translate is your friend!) particularly interesting. They do not render the final output from a single 3D scene. Instead, they generate separate output files, which they then load into Adobe After Effects and adjust manually. The backgrounds, shadows, and more were all generated separately.

Read the full article here: https://cgworld.jp/feature/201812-animecg-book-3.html

This approach is interesting to me. Generating multiple independent layers allowed the creators to touch up each layer using the most appropriate tool for the job. They did not have to get a 3D rendering package to generate the final image (including all effects). Sometimes they touched up the frames in a sequence one by one because it gave more artistic control. The visual end result is what mattered, not which tool they used.

(I remember a friend who, many years ago, wrote some software for a movie special effect saying, “The movie makers did not care at all how hacky the code was. If the end result looked good, the project was done.” The code was never used again.)

Because After Effects can import multiple files and layer them, it also means AE effects can be used when creating the final video for a page.

After Effects Scripting

The following image (again from the article linked above) shows how a final scene was composed from several layers. These layers could be positioned, blurred, shaded, etc. to “look good”. It was not necessary to get a 3D rendering package to get the lighting just right to highlight the various parts of a scene.

This led me to read up on Adobe After Effects scripting. To date I have been doing various scripting in Unity (e.g. to make a character ride a scooter, moving the arms as the character steered). I had imagined creating the final video clips, and even the web story output, from a script in Unity. The ability to do scripting in After Effects (using JavaScript) opened up another option: get Unity to create an After Effects project instead, allowing a final manual tuning pass before creating the final web story.

For example, I have not yet worked out how to get Unity to create a good-quality video file. The recorder package I found creates high-quality PNG files per frame, but in video mode the quality was much lower. This is where After Effects could help: given a set of individual images, one per frame, it can convert them into an MP4 video file. With the scripting API, it might be worthwhile going further, for example adding the speech bubbles inside After Effects and then creating the final web story from the assembled assets. (Sound effects could be added in this way as well.)

So my new plan is to create an After Effects project from Unity, not create a web story from Unity directly.
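To make that plan a little more concrete, here is a minimal sketch of the glue step, written in Python purely for illustration. It assumes Unity has already written numbered PNG frames to a folder, and it only generates the text of an ExtendScript file for After Effects to run. The function name `build_import_script`, the example path, and the page name are hypothetical. The After Effects calls in the generated script (`ImportOptions`, `importFile`, `addComp`, `renderQueue.items.add`) are taken from the After Effects scripting guide, but the generated script has not been tested against any particular After Effects version.

```python
import json


def build_import_script(first_frame: str, comp_name: str, fps: float) -> str:
    """Return ExtendScript source that imports a PNG sequence into a new comp."""
    # json.dumps yields a quoted, escaped literal that is also valid JavaScript.
    frame_literal = json.dumps(first_frame)
    name_literal = json.dumps(comp_name)
    lines = [
        "// Generated file: manual edits will be overwritten.",
        f"var io = new ImportOptions(new File({frame_literal}));",
        "io.sequence = true; // import the numbered files as one clip",
        "var footage = app.project.importFile(io);",
        f"footage.mainSource.conformFrameRate = {fps};",
        f"var comp = app.project.items.addComp({name_literal},",
        "    footage.width, footage.height, footage.pixelAspect,",
        "    footage.duration, footage.frameRate);",
        "comp.layers.add(footage);",
        "app.project.renderQueue.items.add(comp);",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical path and page name, for illustration only.
    print(build_import_script("C:/frames/page01/frame_0001.png", "page01", 24))
```

The generated script stops at adding the composition to the render queue. The output format (MP4 or otherwise) would depend on the output module settings chosen in After Effects, which leaves room for the manual tuning pass before rendering.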

Here are some useful resources I have found so far (ExtendScript is Adobe’s version of JavaScript which can be used to control After Effects and other Adobe tools).

- After Effects scripting: https://buildmedia.readthedocs.org/media/pdf/after-effects-scripting-guide/latest/after-effects-scripting-guide.pdf

- Illustrator also supports scripting, meaning it may be possible to automate the creation of speech bubbles, reducing manual effort: https://www.adobe.com/content/dam/acom/en/devnet/illustrator/pdf/AI_ScriptGd_2017.pdf

- Creating a Composition in ExtendScript: A YouTube video showing how to create a composition via scripting (the video is a little slow if you know programming, but it gets there in the end, and I found it helpful for making the documentation concrete).

- After Effects Scripting Tutorial: Render Queue: Another YouTube video, this time showing how to add a composition to the render queue and render it (generating an MP4 file).

- http://docs.aenhancers.com/introduction/overview/#running-scripts-from-the-command-line-a-batch-file-or-an-applescript-script

- https://community.adobe.com/t5/after-effects/run-jsx-file-from-command-line-windows/td-p/10104026

- https://community.adobe.com/t5/adobe-media-encoder/adobe-media-encoder-scripting-run-from-commandline/td-p/10333412?page=1

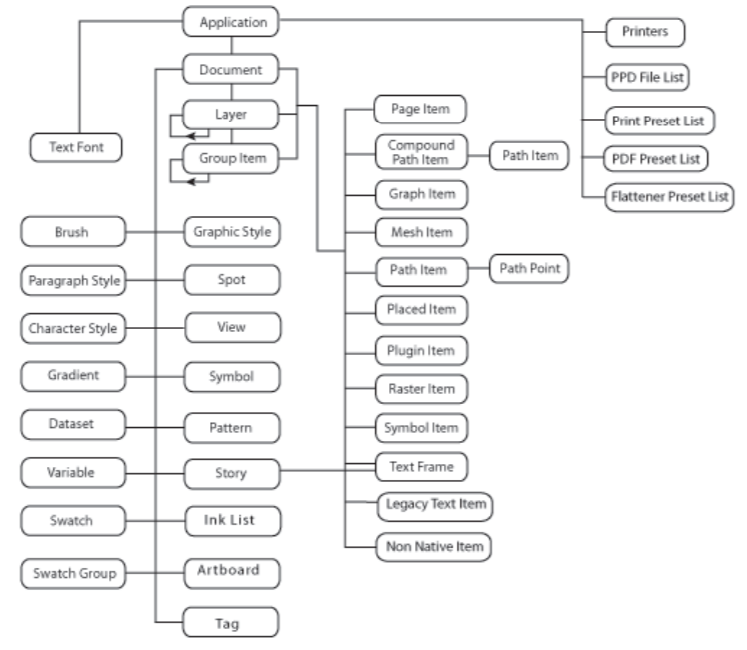

The following is the Illustrator object model exposed to scripts, which, as can be seen, is fairly rich. I would probably create a set of template files for different bubble types (speech, thought, shouting, etc.), then copy and modify the files with scripts to inject the text for a speech bubble, allowing manual fine-tuning of the end result inside Illustrator.

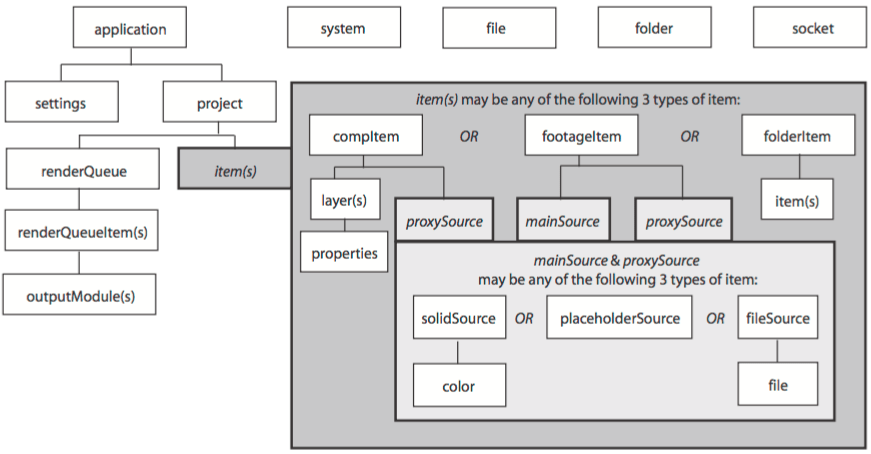
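The template idea for speech bubbles can be sketched without touching the Illustrator scripting API at all. The following is only an illustration, under the assumption that the templates are stored as SVG files (which Illustrator can open) containing a `${text}` placeholder. The inline templates, the `BUBBLE_TEMPLATES` table, and the `make_bubble` function are all hypothetical stand-ins for hand-drawn template files, and this swaps plain text substitution in for an Illustrator script.

```python
from string import Template
from xml.sax.saxutils import escape

# Hypothetical templates, one per bubble type; real ones would be drawn by hand.
BUBBLE_TEMPLATES = {
    "speech": Template(
        '<svg xmlns="http://www.w3.org/2000/svg" width="300" height="120">'
        '<ellipse cx="150" cy="60" rx="140" ry="50" fill="white" stroke="black"/>'
        '<text x="150" y="66" text-anchor="middle">${text}</text>'
        "</svg>"
    ),
    "thought": Template(
        '<svg xmlns="http://www.w3.org/2000/svg" width="300" height="120">'
        '<ellipse cx="150" cy="60" rx="140" ry="50" fill="white" stroke="black"'
        ' stroke-dasharray="6 4"/>'
        '<text x="150" y="66" text-anchor="middle">${text}</text>'
        "</svg>"
    ),
}


def make_bubble(kind: str, text: str) -> str:
    """Copy the template for one bubble type and inject the dialogue text."""
    template = BUBBLE_TEMPLATES[kind]
    # Escape &, < and > so the dialogue cannot break the SVG markup.
    return template.substitute(text=escape(text))


if __name__ == "__main__":
    print(make_bubble("speech", "Fish & chips?"))
```

Each generated file would still be opened in Illustrator for manual fine-tuning, for example to resize the bubble around longer text.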

The equivalent object model for After Effects is documented at https://ae-scripting.docsforadobe.dev/introduction/objectmodel/.

Conclusions

I have not yet attempted to write a full web story generation script, so I do not know how feasible it is, but I do find it appealing to create an After Effects project from Unity output, allowing speech bubble creation in Illustrator and placement in After Effects. It also opens up the possibility of adding sound later in After Effects. From my first experiments, I have not managed to find the “ExtendScript Toolkit” for the latest version of Adobe Creative Cloud (CC), but I did find Visual Studio extensions that do the same job, and they seemed to work acceptably.

I also liked accepting that I do not have to create good-quality anime. In fact, trying to may be a mistake, as my own efforts are not likely to reach an acceptable level of quality. It is fine, even good, to have a distinctive feel. It is more important for the episodes to be self-consistent. It is also important to think about the end result. Is the goal to create an animation that feels like playing a game in Unity? It is important to have an artistic vision, then do whatever is required to achieve that vision. It is using the available tools to create depth in the characters that makes an episode more appealing. For example, see the character getting “roasted” 113 seconds into my first 2D animation attempt, Friendship (Extra Ordinary, Episode 1).

It was also interesting to see how different professional organizations have been moving to leverage 3D animation packages to create shows. For TV series, keeping costs low is important, so automation helps, but the result must be visually interesting as well. It is an interesting time of change.