One of my personal challenges is how to create 3D animated content with relatively low effort and decent quality. I have been building up a library of animation clips in Unity (a game creation platform), which I stitch into a sequence to make up a scene, but it is proving time-consuming. Another approach is to use performance-based recordings, where you perform the action live and record it.

This post lists a number of technologies in the area I have come across (I am sure there are more!). Feel free to add other suggested technologies to the comments below. Please also note this is a list, not a review. I have not used many of these tools so cannot comment on how well they work.

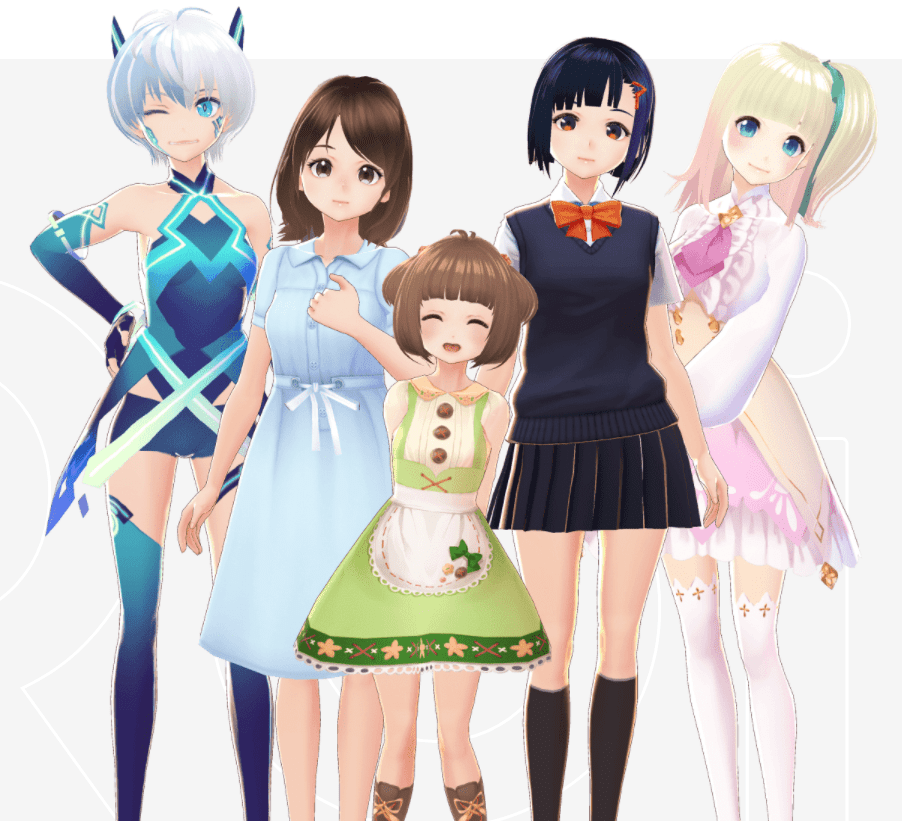

Creating 3D Humanoid Avatar Models

There are lots of tools around, but I like free, so I will put in a quick mention for Blender and VRoid Studio for creating characters. Blender can also create animation clips. VRoid Studio is certainly faster; Blender is more of a tool for professionals.

Some terms that you may come across:

- VRM is a file format for sharing VR avatars, used by a few programs like VRoid Studio, VRChat, and more. It standardizes the avatar file format, making avatars easier to port between tools.

- A “blend shape” distorts a mesh, for example stretching the mouth wider and upwards to form a smile. VRoid Studio bakes a few expressions (joy, sorrow, anger, …) into VRM avatars. ARKit on iOS, instead of detecting full facial expressions, watches for movements of different parts of the face and then applies combinations of 52 blend shapes (eyebrow movements, eye movements, mouth movements, etc.) to create different facial expressions.

- Humanoid bone movements control body and finger poses. Head twists, arm movements, standing in different positions, etc. are all controlled by bones.

- Not covered in this post are texture blends, like blushing, where the skin changes color rather than changing shape.
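To make the blend shape idea above more concrete, here is a minimal sketch in Python. It assumes the common formulation where each blend shape stores a per-vertex offset from the base mesh and a weight between 0 and 1 scales that offset. The two-vertex "mesh", the shape names, and the `apply_blend_shapes` function are all made up for illustration; real tools store this data in their own formats.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def apply_blend_shapes(
    base: List[Vec3],
    shapes: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Displace each base vertex by every active shape's offset, scaled by its weight."""
    result = [list(vertex) for vertex in base]
    for name, weight in weights.items():
        for i, (dx, dy, dz) in enumerate(shapes[name]):
            result[i][0] += weight * dx
            result[i][1] += weight * dy
            result[i][2] += weight * dz
    return [tuple(vertex) for vertex in result]


# Hypothetical mesh: just the two corners of a mouth.
base = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]

# Hypothetical shapes: per-vertex offsets from the base mesh.
shapes = {
    "smile": [(-0.5, 0.5, 0.0), (0.5, 0.5, 0.0)],    # corners move out and up
    "jaw_open": [(0.0, -1.0, 0.0), (0.0, -1.0, 0.0)],  # corners move down
}

# Half a smile combined with a slightly open jaw.
posed = apply_blend_shapes(base, shapes, {"smile": 0.5, "jaw_open": 0.25})
```

Combining several weighted shapes like this is, roughly, how a small set of tracked face movements can produce many different expressions.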

The Types of Data Typically Captured

The following are key types of data that are captured by various tools.

- Body (arm, leg, head, etc) poses

- Facial expressions

- Hand poses and finger movements

- Mouth positions or audio processing for lip sync

Capture Hardware

The following are examples of hardware that can be used to capture 3D movements.

- Video file – some tools do processing offline, so you capture a video file first then load the video file for processing

- Leap Motion Capture / Ultraleap – a camera especially designed to capture hand positions

- Mocap (motion capture) body suits – these contain various sensors to capture how your body moves, and are what professional movie studios use (e.g. rokoko.com). Special gloves to track individual finger positions can often be purchased separately.

- VR equipment (e.g. Oculus or HTC Vive) – the headset, hand controllers, plus additional trackers you can attach to your waist and feet. This is in effect a poor man’s mocap suit.

- Webcam or external USB camera – several tools analyze the video from a webcam to spot key points on the face to track eye movements and facial expressions. Some more recent tools do full body position capture backed by AI techniques.

Capture Software and Libraries

Adobe Character Animator is a 2D cartoon performance recording approach. You can use a mouse or touch screen to drag hands and feet around on the screen, use a webcam for head movements and facial expressions, as well as audio processing for lip sync calculations. I mention it as an example of video capture controlling part of a puppet (and because I have used the program a lot).

Reallusion has Cartoon Animator 4, which is a similar product to Character Animator, but has some cool 360 head view support, and you can use pre-recorded animation clips to control puppets instead of using live recordings. They also recently launched a 3D animation clip library service (ActorCore), with the upcoming Cartoon Animator release able to use 3D animation clips!

Mixamo (also from Adobe) is a library of 3D animation clips they make available. MotionLibrary.com is another similar site. Rokoko also has a library of clips.

Web Cam VTuber Software

Vear is an iPhone app that allows you to be a VTuber using only your phone. It can load a VRM file (like one from VRoid Studio) and then use the ARKit facial data from iOS to control your character’s facial expressions. It is a quick and easy way to record your VR character, but with limited capabilities (as would be expected on a phone).

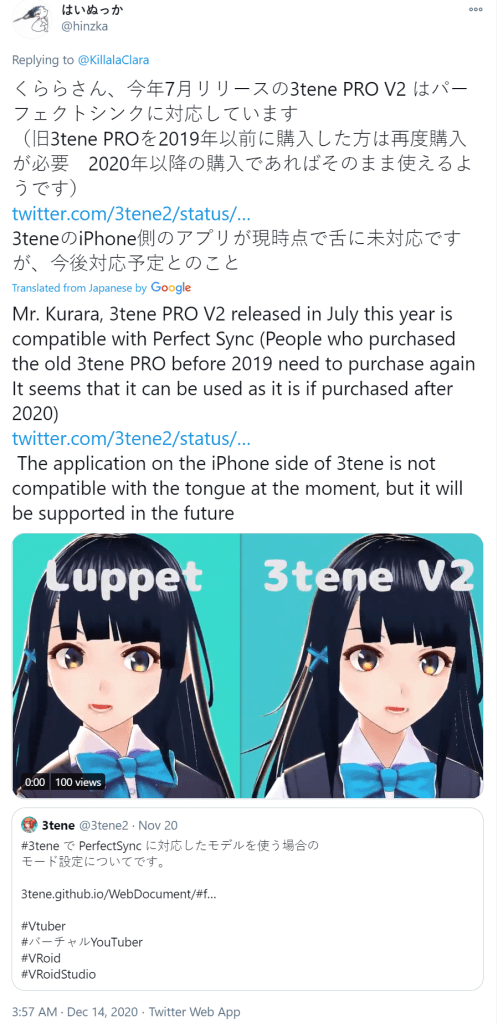

Luppet is software that combines the Leap Motion Controller for hand movements with a webcam for facial expressions and head movements, to drive 3D avatars (such as those created by VRoid Studio). The result is output with a greenscreen background that can be replaced using chroma key software. This is a pretty popular tool for VTubers.

“VSeeFace is a free, highly configurable face and hand tracking VRM avatar puppeteering program for virtual youtubers with a focus on robust tracking and high image quality. VSeeFace offers functionality similar to Luppet, 3tene, Wakaru and similar programs. VSeeFace runs on Windows 8 and above (64 bit only). VSeeFace can send, receive and combine tracking data using the VMC protocol, which also allows iPhone perfect sync support through Waidayo like this.” (Their intro was perfect, so I just copied it here).

Waidayo is another similar tool for facial motion capture. It runs on an iPhone, captures facial expressions, and uses the VMC protocol to send the blend shape events over a network connection to a computer (Windows or Mac).
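As a rough illustration of what "sending blend shape events over a network" can look like, here is a minimal Python sketch. It rests on my understanding that the VMC protocol carries OSC (Open Sound Control) messages over UDP, with a `/VMC/Ext/Blend/Val` message carrying a blend shape name and value, followed by a `/VMC/Ext/Blend/Apply` message. Treat the addresses, the `Joy` name, and the port number 39539 as assumptions to check against the VMC protocol reference. The helper functions are hypothetical and only handle strings and floats.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("utf-8") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *args) -> bytes:
    """Build one OSC message from string and float arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, str):
            tags += "s"
            payload += _osc_string(arg)
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # 32-bit big-endian float
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return _osc_string(address) + _osc_string(tags) + payload


def send_packets(packets, host: str = "127.0.0.1", port: int = 39539) -> None:
    """Send each packet as its own UDP datagram (host and port are assumptions)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for packet in packets:
            sock.sendto(packet, (host, port))


# Set the (assumed) "Joy" blend shape to 50%, then ask the receiver to apply it.
packet = osc_message("/VMC/Ext/Blend/Val", "Joy", 0.5)
apply_packet = osc_message("/VMC/Ext/Blend/Apply")

# To actually send, with a VMC receiver listening on this machine:
# send_packets([packet, apply_packet])
```

In practice you would more likely use an existing OSC library or one of the tools listed here rather than hand-encoding packets; the point is only that each expression change is a small named value sent over the network.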

“3Tene Free V2” supports face tracking and lip sync, plus the Leap Motion camera for finger tracking, so it is similar to Luppet.

Wakaru is free VTuber software that supports eye capture, mouth shape capture, head pose capture, facial controls, and upper body movements.

VMagicMirror is another Windows-based tool that uses a webcam to capture facial expressions and shows the avatar on a greenscreen. Perfect Sync is the part of the solution that does facial expressions.

iFacialMocap captures facial data using the ARKit library from Apple on iOS and sends it over the network to a connector in an application like Unity, Blender, or Maya. (Similar to Perfect Sync.)

HANA_Tool can be used in Unity to add extra facial blend shapes (used for expressions) to a character, for example one created in VRoid Studio. Tools like iFacialMocap need more blend shapes than VRoid Studio provides for good quality results.

UMotion Clip Editor by Soxware is a tool I have found very valuable for editing animation clips inside Unity.

VR Based VTuber Tools

Virtual Motion Capture (VMC) uses Virtual Reality headsets and controllers to control a character. You can use this software to be in a VR game and show your character responding to the movements as if your character is in the game. It has a few different features allowing it to be used for different purposes.

Easy Virtual Motion Capture for Unity (EVMC4U) is a Unity extension that captures VMC data over a network connection so you can control a character inside Unity.

Easy Motion Recorder, “A script that records and plays back the motion of a Humanoid character that has been motion-captured such as VRIK on the Unity editor.” It saves “two facial expressions, BlendShape and Humanoid bone animation.”

Full Body Motion Capture from Video

This is getting into leading-edge research, but it is interesting to see where things are going.

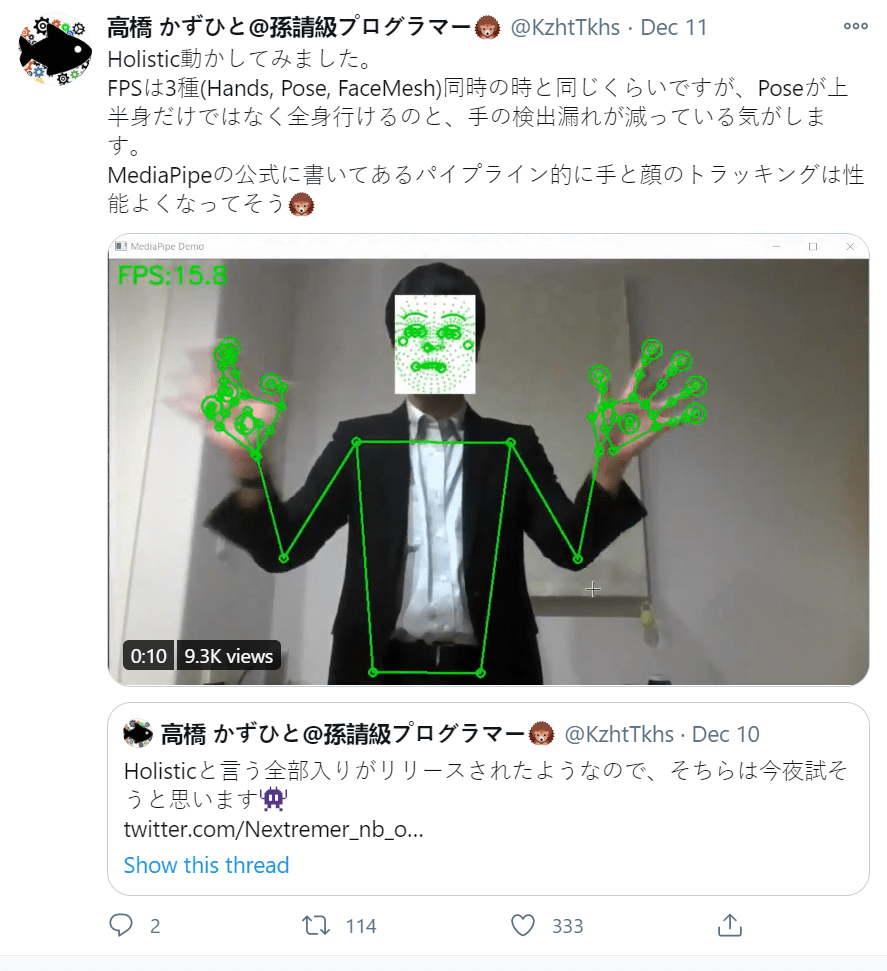

MediaPipe by Google is a machine learning library for face detection, hand movement detection, pose detection, … lots of things. It is a library of code rather than a complete application, so for mere mortals it needs to be included in an easier-to-use tool.

Deep Motion – create an animation clip from a video file. Uses machine learning to turn the 2D video file into 3D human character movements.

VIBE by Cedro3 – an interesting (but short) blog post on how to convert video into animation sequences.

ThreeDPoseTracker – another project using video camera capture to generate animation sequences.
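Pose detection libraries such as MediaPipe report body keypoints (shoulder, elbow, wrist, and so on) for each video frame, and something downstream has to turn those points into bone movements. As a very rough sketch of one such step, the Python below computes the bend angle at a joint from three 3D keypoints. The coordinates are made-up values rather than output from any of the tools above, and real retargeting onto a humanoid rig involves considerably more than this.

```python
import math
from typing import Sequence


def joint_angle(a: Sequence[float], b: Sequence[float], c: Sequence[float]) -> float:
    """Return the angle in degrees at point b between the segments b->a and b->c."""
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.sqrt(sum(x * x for x in ba)) * math.sqrt(sum(x * x for x in bc))
    if norm == 0.0:
        raise ValueError("keypoints must not coincide with the joint")
    # Clamp to guard against tiny floating point overshoot before acos.
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))


# Hypothetical keypoints for one frame: upper arm along x, forearm pointing up.
shoulder = (0.0, 0.0, 0.0)
elbow = (1.0, 0.0, 0.0)
wrist = (1.0, 1.0, 0.0)

bent = joint_angle(shoulder, elbow, wrist)                 # right-angle bend
straight = joint_angle(shoulder, elbow, (2.0, 0.0, 0.0))   # fully extended arm
```

Running this per frame gives a sequence of angles over time, which is the kind of data an animation clip ultimately stores for each bone.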

A Selection of Tweets

- A great list of tools from the creator of HANA_Tool, https://twitter.com/hinzka/status/1338555604005134337?s=21

- https://twitter.com/emiliana_vt/status/1338545893033832451?s=21

To get tracking data into Unity, the easiest way is probably to use tracking programs with VMC protocol support (Virtual Motion Capture, VSeeFace, Waidayo…) and EVMC4U on the Unity side. You can find some information here: https://protocol.vmc.info/Reference

- https://twitter.com/killalaclara/status/1338413672381599744?s=21 – interesting thread (Japanese) talking about different tools.

- MediaPipe https://twitter.com/kzhttkhs/status/1337385123335995392?s=21

Sending Vive Tracker data from VMC to VSeeFace using VMC protocol and combining it with leapmotion & expression tracking… https://twitter.com/suvidriel/status/1337492534168350720?s=21

https://twitter.com/hinzka/status/1338555522237124608?s=21

Conclusions

I want to thank the many people who have suggested different technologies (including some of the tweets above). My personal goal is *not* to become a VTuber, but instead to create lightly animated comics, to be published as Web Stories. Each frame will be a short video clip, with speech bubbles rather than recorded voice. I want to create short animated clips pretty quickly, but with good depth of emotion. I would rather do it all in one tool than jump around between tools, but combining tools may actually be quicker, e.g. using a Luppet-like tool to capture head and shoulder shots fairly quickly, and other more advanced tools for more complex scenes.

Another approach that I found interesting is to use VR to pose a character in front of you in virtual space. One such tool is Play AniMaker by @MuRo. The backgrounds are generally static images (although you can load some furniture). The idea is to animate characters quickly in front of a backdrop.