Yesterday I wrote a blog post on a list of low end motion capture tools. Today I thought I would share my opinions and (limited) experiences of the different options. My goal is to create short animated cartoon sequences, not be a VTuber, so my requirements may differ from what most people are after.

To help explain the difference, here is a random video that YouTube recommended to me. I am not trying to create a music video, but if you watch it you can see a VRoid character with full body motion (dancing) and facial expressions. What I am trying to create is closer to the 3D scenes in this video: edited sequences that fit into an overall experience.

So let’s run through some of the options listed.

Manually Created Head Turn Animation Clips

Before getting into the tools, I did want to say I have been creating short animation clips manually by adjusting the rotation of the head etc. When compared to a motion captured animation, they look, umm, bad. Robotic might be a better description. If you look at an anime episode, there are many scenes where there is only so much movement of the head. I believe this is to keep production costs down for less important scenes. Key scenes on the other hand can contain a lot of dynamic movement. Even small movements of the head make a scene feel much more alive and realistic.

My personal experience is once you start using more realistic animation clips (e.g. recorded by someone with a mocap suit), other sequences using simpler animation clips look cheap and nasty.

Animation Clip Libraries

There are a number of libraries of animation clips around. I have had some luck with these libraries, but I would really like each character to have their own personality. That means things like different walk styles. Is the character elegant? Lazy? Despondent? This should show up in how they walk. So animation clip libraries are definitely useful, and I am planning to use them when I can, but they are insufficient to do a good job of establishing a different personality for each of the main characters. I have too many characters and walk styles that I want to do.

Camera Control Facial Expression Tracking

The face tracking software so far for me has been okay, but not really to the quality I am after. Exploring applications such as Vear, Luppet, VSeeFace, and Waidyo, they track my face but lack the depth of emotion I desire. Maybe it would get better with tuning, or maybe I am not a good face actor, but after trying for several hours the results were acceptable, not great. A cartoon character typically exaggerates expressions for maximum impact. So far these tools gave me a limited feeling of emotion. They were however great to get head movement going in support of a facial expression, reducing the robotic feel.

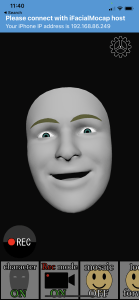

Part of the problem may be that characters created with VRoid Studio lack the richness in facial contours for the different mouth positions to really stand out. For example, using iFacialMocap with the default face in the app shows pretty rich facial expressions.

But when I fed the same facial expressions into a VRoid Studio character (in this case via Waidyo), they all seemed relatively similar to me. I could not make it create angry, crying, etc. expressions. The faces lacked the same breadth of emotion.

Also, several of the apps I tried kept having my mouth slightly open. I had to spend time fiddling with thresholds to get the mouth normally closed. So while easy, some tweaking was required.
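To show the kind of threshold I mean, here is a minimal Python sketch of a "dead zone" on a mouth-open value. This is not code from any of the apps above. The function name, the 0.15 default, and the assumption that the tracker reports mouth openness as a number from 0 to 1 are all mine, purely for illustration.

```python
def apply_dead_zone(raw_value, threshold=0.15):
    """Treat small mouth-open readings as fully closed.

    raw_value: hypothetical tracker reading, 0.0 (closed) to 1.0 (wide open).
    threshold: readings at or below this become 0.0 (an assumed default).
    Readings above the threshold are rescaled so 1.0 still maps to 1.0.
    """
    if not 0.0 <= threshold < 1.0:
        raise ValueError("threshold must be in the range [0, 1)")
    # Clamp noisy readings into the expected range first.
    clamped = min(max(raw_value, 0.0), 1.0)
    if clamped <= threshold:
        return 0.0
    return (clamped - threshold) / (1.0 - threshold)


if __name__ == "__main__":
    for reading in (0.05, 0.15, 0.5, 1.0):
        print(reading, "->", round(apply_dead_zone(reading), 3))
```

Raising the threshold keeps the mouth closed more reliably, at the cost of ignoring subtle mouth movements, which is the trade-off I ended up fiddling with.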

Also, I believe the iOS ARKit library purposely does not capture angry expressions very well. As a result, angry and sad looks from face tracking alone are not particularly reliable. (I need to check which tools do not rely on ARKit and double check whether they do a better job across a wider breadth of emotions.)

I do think these tools are great for live performance and I do expect them to get better. I also think the experimental expression recognition mode of VSeeFace is interesting. You can register exaggerated facial expressions to be triggered by your less exaggerated facial expressions. You tell the software “when I make this expression on my face, you make this exaggerated expression on your face.” While still experimental, I think it may be a good solution to the problem.
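To make the idea concrete, here is a rough Python sketch of that kind of mapping. I do not know how VSeeFace implements it, so treat this as my own guess at the general shape: the expression names, blend shape names, weights, and the `max_distance` cut-off are all made up for illustration.

```python
import math

# Hypothetical registrations: for each expression, the (mild) weights
# captured from my face, and the exaggerated weights for the character.
REGISTERED = {
    "angry": ({"browDown": 0.3, "mouthFrown": 0.2},
              {"browDown": 1.0, "mouthFrown": 0.8}),
    "happy": ({"mouthSmile": 0.4},
              {"mouthSmile": 1.0, "eyeSquint": 0.6}),
}


def distance(a, b):
    """Euclidean distance between two weight dicts (missing keys count as 0)."""
    keys = set(a) | set(b)
    return math.sqrt(sum((a.get(k, 0.0) - b.get(k, 0.0)) ** 2 for k in keys))


def exaggerate(captured, max_distance=0.25):
    """Return (name, weights) for the closest registered expression.

    If nothing is within max_distance, return (None, captured unchanged).
    """
    best_name, best_dist = None, max_distance
    for name, (trigger, _exaggerated) in REGISTERED.items():
        d = distance(captured, trigger)
        if d <= best_dist:
            best_name, best_dist = name, d
    if best_name is None:
        return None, dict(captured)
    return best_name, dict(REGISTERED[best_name][1])


if __name__ == "__main__":
    print(exaggerate({"browDown": 0.28, "mouthFrown": 0.22}))
    print(exaggerate({}))
```

A real tool would presumably blend smoothly between expressions rather than snapping to the nearest one, but the sketch captures the "my mild expression triggers your exaggerated one" idea.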

Leap Motion Hand Tracking

The Leap Motion camera does a pretty good job of tracking finger movements when the hands are in front of the body. But as soon as I put my hands on my head or by my side, the camera lost sight of them. This may be fine for a VTuber streaming while playing a game, as the hands would normally be on controllers or keyboards in front of them, but I quickly found too many things I wanted to record that were not possible because the hands were out of camera range.

So this might be useful sometimes, maybe for recording close ups on hands, but I frequently found it insufficient for my desires.

VR Headset and Trackers

Using a VR headset and trackers gives pretty good accuracy and full body motions. It does not track finger positions, but you can use buttons on the hand controller to flip between different hand presets. My main annoyance with these set-ups (which I explored a while back now) was the environment set up. It was not quick and easy – it took dedicated effort and space to do the recording. You also have to calibrate the trackers each time you use the equipment again. Also, not all of the tools were controllable from inside the VR space, so I had to keep taking the headset off and putting it back on.

However, it does seem to work pretty well and is a lot cheaper than a full body mocap suit (especially since I have a VR headset already). For full body recordings, however, I think I would need to purchase some additional trackers to put on my waist and legs.

Video Clips (or live video) + Machine Learning Models

There seems to be some promising work going on that takes 2D video and uses machine learning techniques to map back to a 3D model. You train the model on lots of 3D characters in different positions and it learns how to recognize 3D poses from 2D shapes. I mentioned a couple of free tools in the previous post, but they are often experimental – not shrink-wrapped solutions (yet).

One I did try, however, was “deepmotion.com”. I was actually pretty impressed. There is a free level of service where you can upload a video file (up to 2 minutes a month) and then download an animation clip in an FBX file. There are various tiers of paid services. I spent maybe 2 minutes recording a video of myself (with not great lighting), uploaded the MOV file taken by my iPhone, then downloaded the FBX file. It was a pretty slick experience. Note that my video clip was only a few seconds long, so within the monthly limit I can create a number of such personalized animation clips for reuse. I just need to plan ahead.

The following video shows body movement controlled by the video, with superimposed random animation clips to control facial expressions (just experimenting to see if they work together). It is a bit jittery, but actually pretty impressive for how little effort it took me. The full body movements certainly add more realistic depth compared to me animating individual joints to turn the head sideways etc., and creating a video file is easy and can be done anywhere. No special hardware set up is required; I just used my phone camera.

Full Body Mocap Suit and Gloves

I assume mocap suits and gloves work well. They are out of my price range, so I have no personal experience here. I find the gloves particularly tempting, to see how much better they track compared to Leap Motion and video based tools. I will say the video based tools do seem to be progressing in quality fairly rapidly.

Conclusions

For my purposes, I would like to have fairly rich full body movements. I am fine with them being animation clips as I can reuse them in different episodes of the series I am trying to create.

Generating full body animation clips from short video clips seems to be making great progress at the moment, and it greatly reduces hardware costs – no special hardware is required. I found it pretty easy to create a full body clip with decent results. The deepmotion.com site was pretty good and easy to use – upload a .MOV file and download an FBX file. It worked pretty well for me. I am hoping the free tools continue to improve in quality to avoid the need for special hardware, making the results accessible to a wider audience.

It feels like if you want to be a VTuber, life is easier. To create a good (err, decent?) quality animation, I want a richer set of emotions than most of the VTuber tools provide by default, but I think the situation is getting better. I think VSeeFace has the greatest potential here with its experimental expression recognition efforts. Either that or more work is needed to create better blend shapes for VRoid characters, ones with more 3D depth on the face (laugh wrinkles around the mouth, etc.).

I suspect I will need a combination of tools – full body animations as well as close ups on individual parts of the body (facial expressions and hand poses in particular).

Once these tools are all in place, all that remains is for me to learn how to act!

(Okay, maybe I should give up on this project now! Lol!)