There is a lot of buzz around AI-generated artwork. Rather than art generation, I am personally more interested in AI tools that speed up boring (non-creative) workflow tasks. For example, in this post I explore some work that has been done on how to create a 3D background for a film or cartoon animation (or just for previsualization before you invest in building real assets) from a 2D image. Why? To get a degree of depth in camera shots (parallax and perspective) while reducing development effort (and hence cost).

Why does this matter?

If you are creating your own computer-generated animated cartoon, you get consistency of characters across shots by reusing the same 3D character models. It can take a lot of effort to create a model, but you will reuse the main characters, so it's worth the investment.

But what about background models? It can be worth the cost if you want to use a location for a range of shots. Using 3D models gives you the freedom to shoot from multiple camera angles, as well as casting shadows correctly. But creating 3D models can be expensive, especially if they are only needed for a few minor shots. So how can you speed up creating a 3D model from a 2D image? The goal is to be able to move the camera around a bit for your shots with realistic perspective, add camera depth blur, and so on.

Layers

2D animation frequently uses multiple layers of artwork to allow camera movement with a feeling of depth. Background artwork in the far distance is on a separate layer and moves more slowly when the camera tracks a character walking across the screen. You can also blur things in the distance. This new and innovative approach can be seen in this Disney video, Walt Disney’s MultiPlane Camera (Filmed: Feb. 13, 1957). 1957!?! Okay, so maybe it’s not that new!
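To make the layering idea concrete, here is a minimal sketch of the parallax arithmetic. It assumes a simple model where a layer's on-screen shift is inversely proportional to its distance from the camera. The function name, the depths, and the pixel units are all made up for illustration, not taken from any particular tool.

```python
def layer_offsets(camera_shift, layer_depths, reference_depth=1.0):
    """Return the horizontal on-screen shift for each artwork layer.

    Assumption: a layer at reference_depth moves by the full camera shift
    (in the opposite direction), and layers further away move
    proportionally less.
    """
    return [-camera_shift * reference_depth / depth for depth in layer_depths]


# Hypothetical scene: character plane, mid-ground buildings, distant hills.
depths = [1.0, 4.0, 20.0]
offsets = layer_offsets(camera_shift=100.0, layer_depths=depths)
for depth, offset in zip(depths, offsets):
    print(f"layer at depth {depth}: shift {offset} px")
```

The distant hills barely move while the character plane slides past, which is the effect the multiplane camera achieved mechanically.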

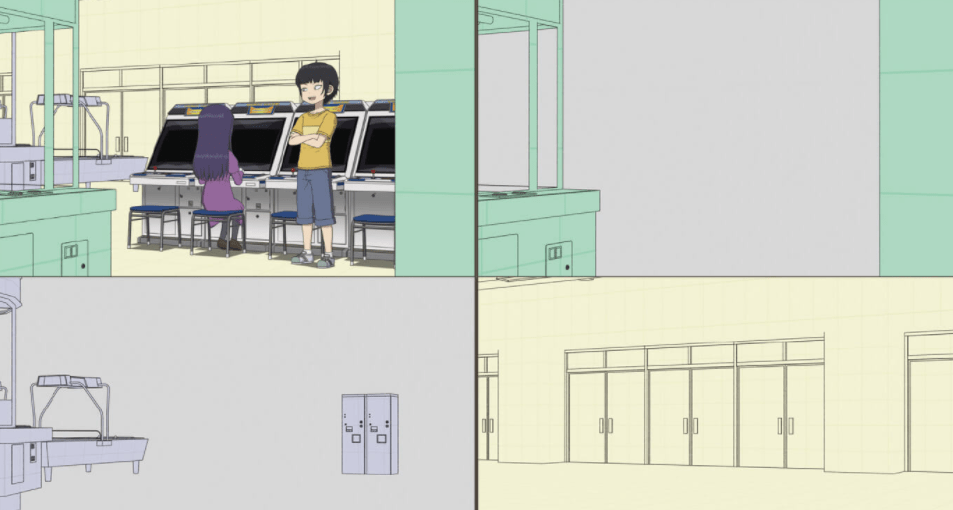

I previously posted 3d Anime Approaches and Thoughts for Myself, which included an example of how the TV anime “High Score Girl” was created. They used Adobe After Effects to layer in background artwork. The backgrounds were drawn to be just wide enough for the shots needed.

Prompt Muse – a more modern approach

I came across a great video from Prompt Muse that walks through converting a 2D AI-generated background image of an alleyway into a 3D model, allowing you to walk down the alley while keeping the artwork perspective correct as the camera moves. The video shows how to use the tools (including Blender) to achieve this, as well as clever AI technology for image touch-ups. The video uses AI to generate the background images, but most of what is said will work for any 2D image.

Create a 3D World using AI Images

For example, here is a 2D background image they use in the video.

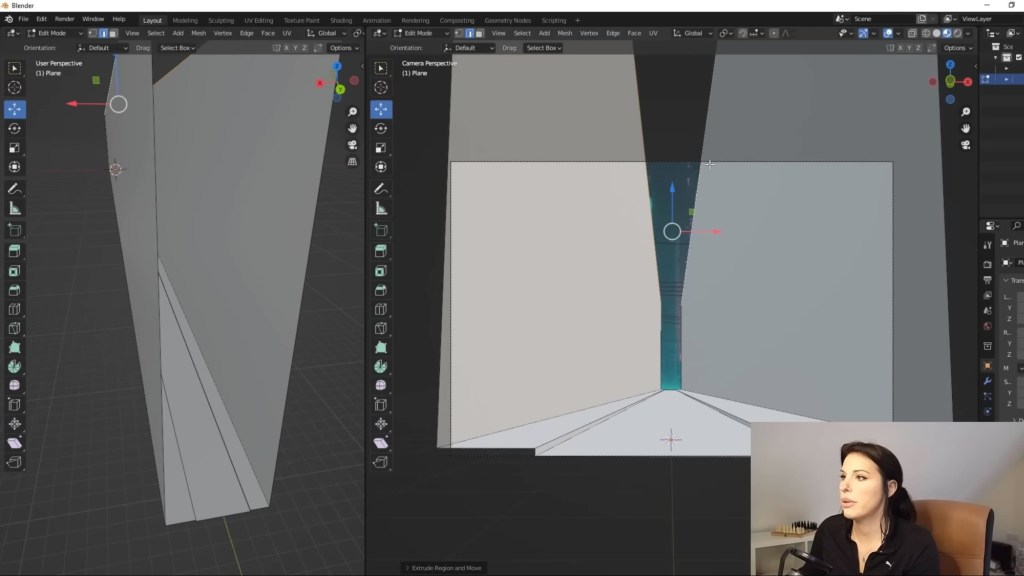

The approach is to create just enough of a 3D model to represent the alleyway, then project the 2D artwork onto the surfaces of the model. The video shows step-by-step commands to map the above 2D artwork onto a 3D model using Blender, with the final result looking “right” without much effort.

Here is the model the video creates for the alleyway. Flat ground with a simple step for the curb, and vertical walls. Pretty simple!
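To show why painting the image onto such a simple model looks “right”, here is a rough sketch of the underlying idea: each vertex of the model gets the image coordinate (UV) where the original camera would have seen it. This is not the Blender workflow from the video, just the pinhole-camera arithmetic behind it. I am assuming a camera at the origin looking along +z, UVs with (0, 0) at the bottom-left of the image, and a made-up field of view and alley size.

```python
import math


def project_to_uv(vertex, fov_degrees=60.0, aspect=16 / 9):
    """Project a 3D point to image UVs through a simple pinhole camera.

    Assumptions: camera at the origin looking along +z, fov_degrees is the
    horizontal field of view, and (u, v) = (0, 0) is the bottom-left corner
    of the image. Points inside the view land in the range [0, 1].
    """
    x, y, z = vertex
    if z <= 0:
        raise ValueError("vertex must be in front of the camera")
    half_width = math.tan(math.radians(fov_degrees) / 2)  # at distance 1
    half_height = half_width / aspect
    u = 0.5 + 0.5 * (x / z) / half_width
    v = 0.5 + 0.5 * (y / z) / half_height
    return u, v


# Hypothetical alley: camera 1.6 units above the ground, left wall 2 units away.
near_corner = (-2.0, -1.6, 5.0)   # where the left wall meets the ground, close by
far_corner = (-2.0, -1.6, 30.0)   # the same edge, far down the alley
for name, vertex in [("near", near_corner), ("far", far_corner)]:
    u, v = project_to_uv(vertex)
    print(f"{name}: u={u:.3f}, v={v:.3f}")
```

The far corner lands much closer to the centre of the image than the near one, which is the vanishing-point perspective already baked into the 2D artwork. Because the texture is pinned to the geometry this way, moving the camera a little keeps the perspective believable.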

If you look at the artwork, there are lots of protrusions and crevices in the side walls. The shortcut is to leave as much of these details out as possible. It won’t be perfect, but if it looks good enough then it is good enough. If you only need it for some simple shots, you don’t need to get more detailed.

In the Prompt Muse video, some plants and rubbish in the alleyway are erased and then filled in with AI generated artwork, consistent with the surrounding artwork (pretty cool!). You can then add some of the additional objects back as simple planes. It gives a little 3D depth, as long as you don’t look too closely. If you need more depth, use a full 3D model. The point is to do the minimal work required to get the result needed.

Cuebric and Virtual Production

I also came across another product via a creativespark.ai podcast episode, What filmmakers need to know about generative AI. Cuebric, from Seyhan Lee, is a product for virtual production studios.

Virtual production is a new trend where you build massive LED panels to display the background of shots, instead of using greenscreens and replacing the background later. The system knows the position of the camera and so adjusts how the background is drawn so the perspective looks right as the camera moves around the scene. Very clever! It was used in series such as The Mandalorian and has the benefit that you see the final effect immediately (allowing the actors and directors to understand the result on set, instead of waiting for post-production to do all the green-screening).
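As a back-of-the-envelope illustration of the camera tracking idea (and certainly not how a real LED volume is implemented), you can ask where on the wall a virtual object needs to be drawn so that it lines up correctly from the camera's current position. The sketch below assumes a flat wall on the plane z = 0, the camera in front of it (negative z), and the virtual object "behind" it (positive z). All names and numbers are hypothetical.

```python
def wall_position(camera, virtual_point):
    """Return the (x, y) spot on the wall where a virtual point should be drawn.

    Assumptions: the wall is the plane z = 0, the camera is in front of it
    (z < 0) and the virtual point is behind it (z > 0). The result is where
    the straight line from the camera to the virtual point crosses the wall.
    """
    cx, cy, cz = camera
    px, py, pz = virtual_point
    if not (cz < 0 < pz):
        raise ValueError("camera must be in front of the wall, point behind it")
    t = -cz / (pz - cz)  # fraction of the way from the camera to the point
    return (cx + t * (px - cx), cy + t * (py - cy))


tree = (0.0, 0.0, 4.0)        # virtual tree just behind the wall
mountain = (0.0, 0.0, 12.0)   # virtual mountain much further back

for camera_x in (0.0, 2.0):
    camera = (camera_x, 0.0, -4.0)
    print(camera_x, wall_position(camera, tree), wall_position(camera, mountain))
```

When the camera slides sideways, the mountain's drawn position follows the camera more closely than the tree's, so through the lens the mountain seems to stay put while the tree drifts across it. That is the same parallax as the layered artwork, just recomputed per frame from the tracked camera position.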

The interesting contribution of Cuebric is that it speeds up background generation.

Cuebric | Revolutionary tool for virtual production

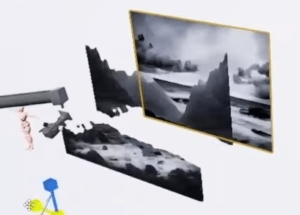

They use Stable Diffusion to generate background images (e.g. of a forest) from text prompts, then use depth maps to split the image into a few layers, then use in-filling to fill the holes left in the further layers where nearer objects were pulled out.
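I have no idea how Cuebric implements this internally, but the layer-splitting step can be sketched in a few lines. The toy example below assumes the image and depth map are same-sized grids, that depth runs from 0 (near) to 1 (far), and that the cut-off values are picked by hand. Some depth models use the opposite convention, so treat this purely as an illustration.

```python
import bisect


def split_into_layers(image, depth, boundaries):
    """Split an image into depth layers, nearest layer first.

    Assumptions: image and depth are same-sized 2D lists, depth values run
    from 0 (near) to 1 (far), and boundaries is an ascending list of
    cut-offs, so N boundaries give N + 1 layers. Pixels that do not belong
    to a layer are left as None (transparent); in the further layers those
    holes are what in-filling would have to paint over.
    """
    layer_count = len(boundaries) + 1
    layers = [[[None] * len(row) for row in image] for _ in range(layer_count)]
    for y, row in enumerate(image):
        for x, pixel in enumerate(row):
            index = bisect.bisect_right(boundaries, depth[y][x])
            layers[index][y][x] = pixel
    return layers


# Toy 3x2 "image": a tree trunk on the left, ground and sky behind it.
image = [["tree", "sky", "sky"],
         ["tree", "ground", "ground"]]
depth = [[0.1, 0.9, 0.9],
         [0.1, 0.5, 0.5]]

foreground, midground, background = split_into_layers(image, depth, [0.3, 0.7])
print(foreground)
print(midground)
print(background)
```

The None entries in the background layer where the tree used to be are exactly the gaps that the in-filling step needs to fill, otherwise you would see holes as soon as the layers slide against each other.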

How do you get a depth map from a 2D image? There’s an AI model for that! (For example, here is a video summarizing a few tools available in Blender.) Mind you, I think recent versions of Stable Diffusion can generate an image and depth map at the same time now.

Blender 3.6 Alpha – Depth Map from 2D Images to 3D Objects

The results are not perfect, but they can be useful even if just used for previsualization purposes. Get a feel for an experience before handing it off to a real artist to create properly. Don’t like the current background? Generate another one, add a building, move it, iterate until you are happy with the experience.

There is also, of course, AI work going on to create 3D models directly instead of 2D images. That has the potential to remove the need for these sorts of tricks. But with AI, there is now always something new coming along.

Conclusions

It is always worth remembering that the goal for film creation is to do just enough to make it look good in the film. You don’t need to worry about what is out of shot; anything not in camera is a waste of effort. If you want some depth to your background, the above videos show a few approaches to create backgrounds with a sense of depth and perspective that are much less effort than creating full 3D models. The approach can be useful if you have access to a great 2D artist rather than a 3D artist. If you don’t have access to an artist at all, you can use generative AI to create the background images.

I expect to see a lot more of this kind of workflow: advanced AI tools that help creatives work faster. At the end of the day, you still need a human to evaluate the quality of the result and guide the overall creative process. Just like gradient fills were once novel in drawing tools, I think generative AI will become another useful tool in the hands of creators.