Today’s NVIDIA Omniverse livestream featured what, to me, was the most compelling vision of the potential of their platform I have seen yet. Meta placed a big bet on the metaverse being the future of social (Ready Player One anyone?), but it has not taken off (yet). VR is cool, but remains a niche market, and frankly the graphics quality is not that good. NVIDIA Omniverse is not targeted at social — it instead provides a robust development platform for rendering and manipulation of 3D assets. I think there are many real-world applications of the NVIDIA model, where solutions are becoming more cost effective for businesses.

What is NVIDIA Omniverse?

“NVIDIA Omniverse™ is a computing platform that enables individuals and teams to develop Universal Scene Description-based 3D workflows and applications” – https://www.nvidia.com/en-us/omniverse/.

Err, okay, but what does that mean in practice? What problems are they solving? Why should anyone care? There are lots of other 3D modeling tools (Blender, for example, is a popular free tool), and 3D rendering has been commonplace in video games for a long time, so why am I so excited?

Three things:

- Standardization

- Tooling

- Performance

Standardization: Enough of the 3D tools in the market are standardizing around Universal Scene Description (USD) that I believe it has a chance of becoming *the* major way to interchange 3D models consistently between platforms. USD came from Pixar, which open sourced it, and is how they describe scenes and characters in Pixar movies. (I talk more on special USD capabilities below.)

Tooling: The NVIDIA Omniverse tooling is getting pretty useful. They have developed an extensible framework with the ability to compose your own scenes in USD. It’s free for individuals, with a paid license for enterprise customers (with some very cool additional tools to manage 3D asset collections and collaborative development). New extensions can be developed by anyone in Python, meaning you don’t have to start from scratch to create useful, working 3D tools. Don’t get me wrong, the tools are still pretty new with rough edges, but it is a real start. (And I don’t touch on the simulation capabilities in this post.)

Performance: Hardware (GPUs) and network bandwidth are getting fast enough to cope with the huge volumes of data that good 3D models typically involve. I started up the free Cesium extension (see the second half of the video above) in Omniverse Compose (formerly Create) and in short order had a 3D model of the world loaded (from Bing’s map data), with an overlay of 3D buildings created from satellite and drone imagery. (I found my own house in it!) I had a Google Maps or Bing Maps-like experience, all in USD, zoomed in, downloaded, and rendered in seconds (not minutes). The video above shows buildings and factories under development, placed in their correct real-world positions.

Use cases

Why is this cool? There are so many possibilities this opens up, similar to the early days of HTML.

First example: With a bit of Python scripting (ChatGPT anyone?), a movie production team could build an extension to tag real world locations for planned shots. This can help the director previsualize what shots will look like, including simulating the sun position at different times of the day. They can quickly mock up virtual sets to superimpose over real world locations to check out the overall look. What will be in shot and what will not? What real world signs need hiding/replacing? The set building team gets a 3D model to understand what to build. “Don’t make that fake building façade quite as tall – I want to see the sunlight coming through the mountains in the background.” All of the involved teams can access the shared model at any time, improving communication between the various creative teams at all stages of the project.

There are lots of other related cool AI-based technologies coming along too. For example, photogrammetry is improving in leaps and bounds. Using a phone, you can take a video walking around and through a physical building and create a 3D model. Again, for a movie it could enable visualization and shot planning in advance. Action sequences running through multiple locations can be previsualized before incurring the full expense of a complete production crew on site. Will special lighting be needed? This won’t result in a radical change to the cost of making a movie, but it can help improve efficiency and reduce risk.

Another obvious use case for 3D modeling is architecture and civic planning. New buildings, roads, bridges, or dams can be visualized in their proposed environment. How will the building fit into the surrounds? How much sunlight will it block to neighbors? What will the impact be on pedestrian traffic?

For what I have described so far, USD is not mandatory. Standardization is what helps make it cost effective. Today there are many different 3D model formats with different capabilities. Standardizing data formats makes it easier for tool makers to create custom experiences for specific needs, increasing the value of 3D assets. But read on, I do think USD is significant.

Digital twins

Creating 3D models of new structures can be useful before real-world construction starts, but 3D models of existing buildings can also be useful. Enter the world of digital twins!

Do you have a factory? With a 3D model you can more easily create safety training courses of your factory floor. Teach new staff the safest routes through your factory in easy to understand videos. Create interactive exercises for staff to evacuate in a virtual fire drill starting from 10 random locations.

Or maybe an enterprise wants to provide 3D navigation to a meeting room or desk, helping staff visiting a new location. It’s a useful and practical tool for large organizations, mainly held back by cost effectiveness of creating an app and the 3D model needed by the app. (By the way, did you know you can run Omniverse in the cloud, perhaps allowing it to stream video via a web browser or custom app?)

Another example. You have fire alarm sensors distributed through your building. If one goes off, why not show its location in a 3D model, speeding up response times in the event of an emergency? Or show a security team which security doors are opening in a building late at night, including which lights have not been turned off. Maybe you want to show the location of fire extinguishers that need routine maintenance, or indoor plants to make sure they are all watered. What about equipment in a factory? Hook up sensors so rather than saying “Equipment item 3423441 is getting overly hot”, you can show its location. 3D models can help humans understand the position of things faster.

Today, each such system would have to build its own 3D world model. That changes with tools like Omniverse. It can draw data from multiple locations to compose a single view. Each data source only has to return the location and state of its sensors, and the Omniverse app can render the results. (The Omniverse-based app can take the place of a web browser, fetching data from multiple sources owned by different teams.)
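As a rough sketch of that idea, assume each team exposes its sensors as simple records with a position and a state. The `SensorReading` type, its field names, and the helper functions below are all hypothetical illustrations, not part of any Omniverse API. Combining several independently owned sources into one view could then look something like this:

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class SensorReading:
    """Hypothetical record that a data source returns for one sensor."""
    sensor_id: str
    kind: str                              # e.g. "fire_alarm", "door", "temperature"
    position: tuple[float, float, float]   # x, y, z in the building's coordinates
    state: str                             # e.g. "ok", "triggered", "open"


def compose_view(*sources: Iterable[SensorReading]) -> dict[str, SensorReading]:
    """Merge readings from independently owned sources into one lookup by id."""
    view: dict[str, SensorReading] = {}
    for source in sources:
        for reading in source:
            view[reading.sensor_id] = reading
    return view


def needs_attention(view: dict[str, SensorReading]) -> list[SensorReading]:
    """Return every reading whose state is not "ok", sorted by sensor id."""
    flagged = (r for r in view.values() if r.state != "ok")
    return sorted(flagged, key=lambda r: r.sensor_id)
```

A rendering app would only need the `position` and `state` of each flagged reading to highlight it in the 3D model; it does not need to know which team's system the reading came from.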

So a “digital twin” is where you have a virtual 3D model of a real-world asset, ideally with live synchronization of data between the real-world asset and the virtual one. Why not let security quickly turn on lights at a location before a guard has to investigate a possible incident at night? Drag out a rectangular region and click “lights on”. Or how about linking positional data of a robot arm on a factory floor to a 3D virtual model, allowing examination of what is happening on the factory floor in real time without an employee physically being there? Maybe hook this all up with an AR app on a phone, and show maintenance staff all the locations of equipment that needs maintenance today. Prove the inspection has been performed by getting the maintenance worker to tap a button on an app (recording their 3D position) or to take a photo of the inspection point (with geotagging in the photo). Make it easier to find historical inspection photos later by showing all nearby locations where photos have been taken over time.

You can also use the 3D model for planning future changes. Want to install some new equipment? Add it to your 3D model, then see how it may impact traffic flow on a factory floor. Incorporate it into the employee AR app and workers on the floor can experience it firsthand before installation, reporting back potential issues. Again, this becomes possible through the sharing of data, similar to what is possible with the web today. Different sources of data can be combined to assemble the final view.

Is USD the new HTML for 3D worlds?

So far I have not talked about why USD can help with this vision. There are existing 3D model formats, so why not just use them? Because USD has special capabilities that other formats do not. In particular, it natively supports the concept of layering.

Think about some of the types of 3D data I have mentioned so far. Who should own the data? Does it all need to be centralized? That is not how the web works. Web pages (and the data behind those pages) are normally maintained by the team that owns that content. USD enables a similar model in 3D worlds through the use of layers. A final scene can be created by selecting which layers of data to include. Load up the current office 3D floor map, then layer on lighting information, or electrical, or fire doors, or fire extinguishers, or pot plant locations. Different teams can own their own data, keeping it up to date and then making it available to other teams so anyone can combine it to produce the desired end result. The data is not tied to a specific tool.
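To make the layering idea a little more concrete, here is a minimal sketch that writes out the text of a USD root layer (in the human-readable `.usda` form) that does nothing except pull in other teams' layers via `subLayers`. The file names are made up for illustration, and the helper function is my own, not part of the USD library. In real projects you would more likely use the USD Python API, but plain text keeps the example self-contained.

```python
def root_layer_usda(sublayer_paths):
    """Return the text of a .usda root layer that includes the given sublayers.

    In USD, sublayers listed earlier are stronger than those listed later,
    so put the layer whose opinions should win at the top of the list.
    """
    entries = ",\n".join(f"        @{path}@" for path in sublayer_paths)
    return (
        "#usda 1.0\n"
        "(\n"
        "    subLayers = [\n"
        f"{entries}\n"
        "    ]\n"
        ")\n"
    )


# Hypothetical layers, each owned and maintained by a different team.
office_view = root_layer_usda([
    "./fire_safety.usda",   # fire doors and extinguishers
    "./lighting.usda",      # light fittings and their state
    "./floorplan.usda",     # base office geometry
])
print(office_view)
```

Someone who only cares about lighting could build a different root layer listing just `lighting.usda` and `floorplan.usda`; the underlying files stay untouched.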

Further, USD supports “non-destructive overrides”. This is useful for future planning. There can be a shared global model for the current floor plan, then another layer can be created that deletes some office walls and adds new office walls in new positions. USD layers support deleting and modifying data from other layers without the other layer being modified. You can start from the current floor plans and then develop 5 variations if you want. Each variation will only contain the changes from the base model. Will the new office plan impact the position of fire extinguishers or lighting? The floor planning team can publish the new proposed floor plan and share it with other departments to review, each with their own needs in mind. It is both standardization of data formats and well defined ways to combine them that has real power for a wide range of use cases.
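The following toy model is one way to picture non-destructive overrides. It is plain Python, not USD's actual composition engine, and the prim paths and attribute names are invented for illustration. Each layer holds "opinions" about prims, the strongest layer wins per attribute, and a prim is hidden by marking it inactive (which mirrors how a stronger USD layer typically deactivates a prim rather than erasing it from the weaker layer).

```python
import copy


def compose(layers):
    """Resolve a stack of layers, strongest first, into one scene dict.

    Each layer maps a prim path to a dict of attribute opinions. For every
    attribute, the strongest layer that has an opinion wins. Prims whose
    resolved "active" value is False are left out of the result.
    """
    scene = {}
    for layer in reversed(layers):  # apply weakest first so stronger layers overwrite
        for path, attrs in layer.items():
            scene.setdefault(path, {}).update(copy.deepcopy(attrs))
    return {path: attrs for path, attrs in scene.items() if attrs.get("active", True)}


# Shared base layer owned by the facilities team (hypothetical data).
base_floorplan = {
    "/Office/Wall_A": {"start": (0, 0), "end": (0, 5)},
    "/Office/Wall_B": {"start": (0, 5), "end": (4, 5)},
}

# A proposal layer containing only the changes: hide one wall, add another.
proposal_1 = {
    "/Office/Wall_B": {"active": False},
    "/Office/Wall_C": {"start": (0, 5), "end": (6, 5)},
}

proposed = compose([proposal_1, base_floorplan])
print(sorted(proposed))        # walls visible in the proposal
print(sorted(base_floorplan))  # the base layer itself is unchanged
```

Each additional variation would be another small layer like `proposal_1`, composed over the same untouched base.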

Was this accidental in USD? No. Pixar designed this concept in from the start, because they have multiple teams working in parallel on Pixar movies. One team designs locations, another designs characters, another may implement a new fur simulation model, lighting is done by another team, and so on. Having rich capabilities for combining such information is one thing that sets USD apart. USD was designed for collaborative authoring and maintenance.

Conclusions

Time will tell whether USD becomes the HTML of a 3D browser, but it is off to a good start. It already has wide support from 3D tool vendors, and people (like those in the video above) are building some pretty impressive applications. The whole concept of allowing teams to maintain the data that falls into their domain is useful in large organizations, even if they are not creating movies like Pixar. Separating the concept of data creation from rendering is another step forwards (again similar to web servers generating HTML and browsers rendering it). As cost effectiveness improves, I believe the apps based upon it will improve too.

I also like that the visual quality of what can be created is very high. I would hesitate to create a virtual ecommerce store in what I have seen of most VR headsets. It is cute, but not great quality. The renderings supported in Omniverse, on the other hand, are fantastic quality. You can create amazing quality locations and assets in those locations. It is brand building for a merchant rather than brand damaging. (Okay, okay. High quality can come with big downloads, but things are getting better. I would not be surprised if it was soon possible to host a 3D world in the cloud and stream video to the user’s device.)

Will the NVIDIA Omniverse tools become the 3D browser of the future? I don’t know. For example, I believe the NVIDIA Omniverse tools currently rely on NVIDIA GPUs (surprise!). So I think their tool chain will drive a lot of lessons, but there may need to be more standardization allowing the development of multiple 3D browsers (just like there are web browsers) – tailored for different devices.

USD is also like HTML in that it does not capture actions (there is no JavaScript in USD to be executed in the browser in response to user actions). But just like Netscape in the early days helped HTML rise to fame for the open web, I believe the Omniverse set of tools has the potential to help USD become the HTML of future 3D worlds. Why Omniverse and not Unreal Engine, Unity, Blender, Maya, Adobe Substance, Apple AR, etc.? They all have USD support, but I think there is an important difference. Many tool vendors can import or export data to/from USD, but it’s not their native format. In Omniverse USD *is* the native format, including native support for all the layering capabilities. So I think it is a better platform for exploring USD use cases.

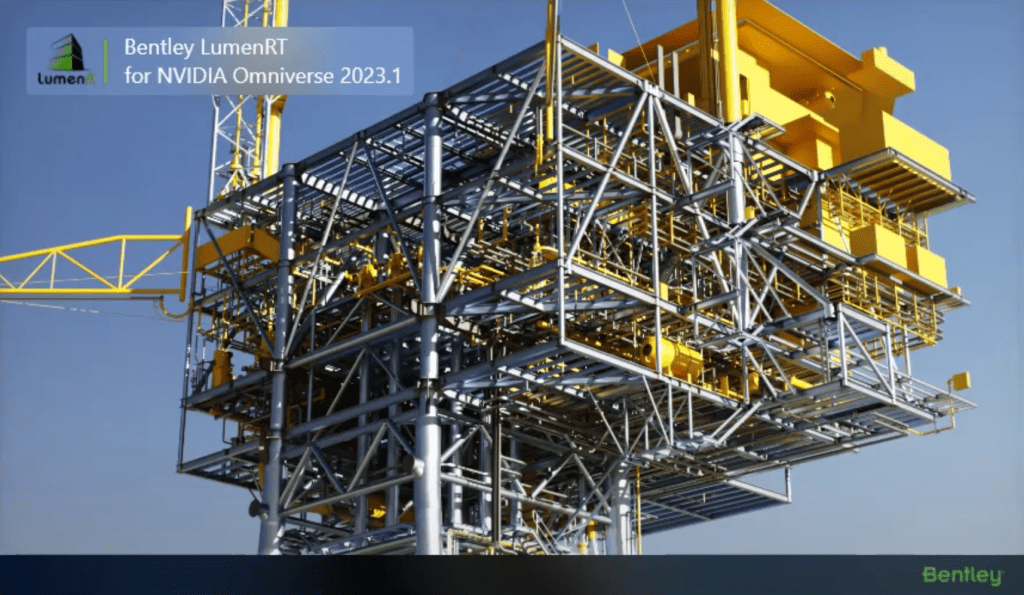

But watch the video yourself, or check out Introducing Bentley’s LumenRT for NVIDIA Omniverse in addition to Cesium Ion. No, I don’t think it’s radical new technology. I do think it provides a glimpse of a real “metaverse” that has broad applicability, and it’s maturing rapidly.

And yes, I am probably a bit behind, considering The Metaverse Standards Forum has a working group on “3D Asset Interoperability using USD and glTF”. But exciting days are ahead, I suspect. AI is not the only space with some movement happening.