I have been using Unity for a while to create cartoon animations, and have recently been exploring NVIDIA Omniverse to see how it has been progressing. USD was originally developed by Pixar for animated movie production, and NVIDIA have been investing in AI technology such as mapping audio files to facial animation and lip synchronization. From what I can see, though, Omniverse is putting more effort into topics such as Digital Twins, robotics, and physics engines. That does not mean I cannot use it, but where are the gaps?

This blog post compares features in Unity and Omniverse for creating animated videos. They are pretty close. (It also includes a checklist, more for myself, of challenges I don’t know how to solve yet.)

Scenes

In Unity, scenes hold a hierarchy of game objects, with references to asset files on disk. Prefabs (and prefab variants) contain reusable hierarchies of objects, allowing larger structures (like buildings) to be built once and reused across scenes. References to files internally use GUIDs to preserve file references even if files are renamed or moved. Overrides can be defined in a scene to position the prefab or make other local adjustments. Game objects can have components added for holding properties and defining behavioral code (in C#) on objects.

In USD, a stage is built from a hierarchy of prims (primitive elements), which can include and overlay other USD files. There is extensive support for layering and overrides. Prims in files are identified by path names such as /World/Environment/Sky. Prims can have a USD schema type which defines properties to be stored with the prim. Behavioral code is written in Python but is not part of the USD spec. If you save and load a USD file, you save and load all the prims and property values, not behavioral code.
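As a rough mental model of the layering and overrides described above, here is a small sketch in plain Python. It is not the USD API: the layer contents, the property names, and the `resolve` helper are all made up for illustration. Each layer holds "opinions" keyed by prim path, and the strongest layer that has an opinion on a property wins.

```python
# Toy model of USD-style layering. This is NOT the real USD API.
# Each layer maps a prim path to property opinions; layers are ordered strongest first.

def resolve(layers, prim_path, prop):
    """Return the value from the strongest layer that has an opinion."""
    for layer in layers:
        opinions = layer.get(prim_path, {})
        if prop in opinions:
            return opinions[prop]
    return None  # no layer has an opinion on this property

# Hypothetical asset layer: defines the sky with its default values.
asset_layer = {"/World/Environment/Sky": {"intensity": 1.0, "texture": "sky_day.hdr"}}

# Hypothetical shot layer: overrides a single property for one shot.
shot_layer = {"/World/Environment/Sky": {"intensity": 0.3}}

layers = [shot_layer, asset_layer]
intensity = resolve(layers, "/World/Environment/Sky", "intensity")  # from shot_layer
texture = resolve(layers, "/World/Environment/Sky", "texture")      # from asset_layer
```

The shot layer only stores what it changes, which is roughly the role a scene-level prefab override plays in Unity.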

Looks

Both Unity and USD support the concepts of materials, textures, shaders, and 3D skinned meshes. These concepts are fairly consistent as they are part of the definition of 3D models. Note that Unity shaders cannot all be automatically converted to Omniverse shaders, but the simple projects I have brought across so far using the Omniverse connector for Unity look pretty good. It is the fancier custom shaders for special effects like drops of water on glass that are problematic.

Locations

Location models are typically created from collections of 3D models organized into Unity scenes / USD stages. Unity has Terrain support with “painting” of models such as trees, bushes, flowers, grass, gravel, and so forth onto 3D textured ground (hills and valleys). The scene does not contain these models directly; instead it holds the terrain data that is used by the terrain engine at runtime to position trees and other foliage. Omniverse does not have a terrain engine. It has a tree painter, but painting places model instances directly into the scene, just like any other model.

Unity also has support for Level of Detail (LoD), so a distant object can be rendered with a lower quality model, automatically switching to a higher quality model when it is close to the camera (for performance). USD has support for variants, which can be used to capture the different LoD versions of objects, but specific rendering pipelines need to implement LoD support (Houdini does have support, but Omniverse does not).
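Where a renderer does not switch LoD automatically, a script could in principle pick the variant itself. The sketch below shows only the selection logic, in plain Python. The variant names and distance thresholds are hypothetical, and nothing here is an Omniverse or USD API call.

```python
import math

# Hypothetical thresholds: (maximum camera distance, variant name to use).
LOD_LEVELS = [(10.0, "LOD0"), (40.0, "LOD1"), (math.inf, "LOD2")]

def pick_lod(camera_pos, object_pos, levels=LOD_LEVELS):
    """Return the name of the first LoD variant whose distance limit covers the object."""
    distance = math.dist(camera_pos, object_pos)
    for max_distance, variant in levels:
        if distance <= max_distance:
            return variant
    return levels[-1][1]  # fall back to the coarsest level

near = pick_lod((0, 0, 0), (3, 4, 0))    # distance 5
far = pick_lod((0, 0, 0), (100, 0, 0))   # distance 100
```

For pre-rendered animation (as opposed to a game), this choice could even be made once per shot rather than per frame.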

The bottom line is I think I can move projects from Unity to Omniverse, but I may need to purchase a Unity extension to turn terrain into actual tree instances, which Omniverse can understand.

Characters

I am using VRoid Studio for character creation. Characters are exported as VRM files (VRM is a standard based on GLB). Unity has a UniVRM importer module that adds the required Unity components for hair spring bones, blendshapes for facial expressions, and so on. Unity has the concept of “Humanoid” animations where instead of animating bone joints directly, a series of “muscles” are defined which can only move in ways a human can move (e.g. the knee joint of a human cannot rotate the lower leg sideways – only backwards and forwards). There are also 3rd party extensions for cloth dynamics.

Omniverse supports “animation retargeting” where an animation clip for one character can be mapped onto “skeleton animation”, which is then mapped on to the target character (so it achieves the same thing as humanoid animation). But I have a number of outstanding problems with importing VRoid Studio characters:

- The Omniverse GLB importer skips the root bone when adding skeleton rigging. The root bone is mandatory for some other tools.

- There is no UniVRM equivalent package to add all the missing Omniverse behavioral code to the model (for things such as hair bone physics calculations, or clothing cloth physics support).

- I have not had a chance to investigate blendshapes in Omniverse, how to animate them, etc.

- I have not had a chance to investigate texture tiling in Omniverse, which can be used for effects such as blushing cheeks, or different eye effects (angry, dizzy, blank, and normal).

In Unity, I created my own component with property controls per emotion, so animation just adjusted the “blush level” property, and the component was responsible for performing that effect on the character. This provides isolation between animation clips and the implementation of the effect: I can change how blush is implemented without breaking the animation clips. In Omniverse, it looks like you can do the same through a combination of properties which get animated, and separate Python scripts for the behavioral implementation.
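To make the isolation idea concrete, here is a minimal sketch in plain Python. The class name, the property names, and both effect implementations are hypothetical, and this is not how Omniverse scripts are actually wired up. The point is only that animation touches one number, and the implementation behind it can be swapped.

```python
class BlushController:
    """Hypothetical component: animation clips only ever set blush_level."""

    def __init__(self, apply_effect):
        self._apply_effect = apply_effect  # swappable implementation
        self.blush_level = 0.0

    def update(self):
        level = min(1.0, max(0.0, self.blush_level))  # clamp to the range 0..1
        return self._apply_effect(level)

def blush_via_blendshape(level):
    # Implementation A: drive a (hypothetical) blendshape weight directly.
    return {"blendshape:Blush": level}

def blush_via_texture_tile(level, tiles=4):
    # Implementation B: pick one of N cheek texture tiles laid out in a row.
    index = min(tiles - 1, int(level * tiles))
    return {"cheek_uv_offset": (index / tiles, 0.0)}

controller = BlushController(blush_via_texture_tile)
controller.blush_level = 0.5        # the only value an animation clip would key
result = controller.update()
```

Switching from `blush_via_texture_tile` to `blush_via_blendshape` changes how the effect is produced without touching any keyed values.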

So extra research I need to do is:

- Define properties for character emotions, so I can animate the values.

- Write behavioral code for mapping emotion strength settings to the actual character.

- Explore emotions in Omniverse, choosing between blendshapes, texture tiling, Audio2Face facial controls, and Audio2Gesture.

Animation

Both Unity and Omniverse support animation clips, but outstanding challenges include:

- How to map humanoid animation clips into Omniverse animation clips with retargeting

- The Omniverse sequencer does not support blending between clips

- The Omniverse sequencer does not support “filtering” (avatar masking) like Unity, and I use that a lot (e.g., make bottom half of character obey a “sit” animation clip or pose, and upper half of body follows mocap captured movements)

- I am not sure how to mix procedural animation (e.g., “look at target”) with animation clips

- I don’t have the ability to record live motion data in Omniverse, like the Unity EVMC4U and EasyMotionRecorder packages. (This should be doable.)

- Facial expression and lip sync in Omniverse (Audio2Face) need to be integrated with the Sequencer (I don’t want to animate a face based on individual audio clips – I want to base it on the audio track of a scene in the Sequencer)

- There is also Audio2Gesture in Omniverse; I need to work out if I can use that.

- Animation clip looping in the Omniverse Sequencer does not support root motion: the character keeps jumping back to the start point on each loop. I want to be able to chain, say, a loop of walking, a transition animation to sit down, then a loop of idle sitting animation.
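The root motion looping problem in the last item can be made concrete with a small sketch. It assumes a walk clip with a known duration and a known forward displacement per loop, moving at constant speed (the function name and all numbers are made up). Supporting root motion across loops amounts to adding the displacement of every completed loop to the position within the current loop.

```python
def root_position(t, clip_duration, clip_distance, accumulate=True):
    """Forward root position at time t for a looping, constant-speed clip."""
    loops_done, local_t = divmod(t, clip_duration)
    within_loop = clip_distance * (local_t / clip_duration)
    if accumulate:
        # Root motion carried across loops: the character keeps moving forward.
        return loops_done * clip_distance + within_loop
    # No accumulation: the character snaps back to the start on each loop.
    return within_loop

# Hypothetical 1 second walk cycle that covers 2 units, sampled at t = 2.5 seconds.
with_root_motion = root_position(2.5, 1.0, 2.0)
without_root_motion = root_position(2.5, 1.0, 2.0, accumulate=False)
```

Because the position is a pure function of time, this style of calculation still works when scrubbing backwards and forwards through a timeline.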

Some of the concepts are supported in Animation Graphs in Omniverse, just not with the Sequencer. In Unity, you cannot easily mix animation state machines with Timeline animations, and the same seems to be true in Omniverse. This may be because you want to be able to scrub forwards and backwards through a timeline and have the character move to the correct position for that time point. That does not work well with Animation Graphs.

In Unity, I have been using the Sequences package, which lets me jump between multiple shots quickly. You can organize “Sequences” (backed by Timelines) into a hierarchy, where each Timeline has recorder clips to record that segment of the Timeline to a video file. A Timeline can have multiple override tracks (for use with Avatar masks) which I use to start with a full body walk cycle, then possibly override upper body movements, with another override for the facial expressions, and additional overrides for the hand posing. You can collapse a group of tracks to keep it manageable. There is also a Cinemachine package for camera movement controls, such as looking at and following a target, follow lag support for smoother camera movements, camera shake support, and blends between different camera markers making some nice transitions possible (e.g., zoom in on a character and change the background blur at the same time, with a smooth blend between a start and end position).

What I have seen in Omniverse indicates the Sequencer is restricted to one sequence being loaded at a time, which may mean either I will have to pack more into one sequence, or I will have to jump between files more often, which could be less convenient. There are basic camera controls in Omniverse, but I am not sure the full Cinemachine functionality is there. It may be that I don’t need it. The layering of clips may also be kind of possible, just painful to use. But there is no blending (no blend-in and blend-out durations) between animation movement and pose clips, which is a more serious gap (for me). I am also not sure whether the Movie Recorder in Omniverse can be controlled from a track in the Sequencer yet. Because of physics, you frequently want to start recording one or two seconds into a clip, not from the start, so that the physics for hair dangles and wind effects has a chance to reach a steady state.
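For reference, this is roughly what I mean by blend-in and blend-out durations, sketched in plain Python. The function names are hypothetical, and poses are simplified to a dictionary of joint angles mixed linearly (real animation systems normally blend rotations as quaternions).

```python
def clip_weight(t, start, end, blend_in, blend_out):
    """Weight (0..1) of a clip at time t, ramping linearly at both ends."""
    if t < start or t > end:
        return 0.0
    weight_in = 1.0 if blend_in <= 0 else min(1.0, (t - start) / blend_in)
    weight_out = 1.0 if blend_out <= 0 else min(1.0, (end - t) / blend_out)
    return min(weight_in, weight_out)

def blend_pose(pose_a, pose_b, weight_b):
    """Linearly mix two poses given as {joint name: angle in degrees} dicts."""
    return {j: (1 - weight_b) * pose_a[j] + weight_b * pose_b[j] for j in pose_a}

# Hypothetical sit pose clip from 0s to 4s with 1 second blends at each end.
w = clip_weight(0.5, start=0.0, end=4.0, blend_in=1.0, blend_out=1.0)
pose = blend_pose({"knee": 0.0}, {"knee": 90.0}, w)
```

Halfway through the blend-in the knee is halfway between standing and sitting, instead of popping straight to the sit pose when the clip starts.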

Cameras

I have already mentioned camera position animation above, and hinted at settings like depth of field background blurring, but there are more settings: for example, aperture, f-stop, lens effects (like lens flare), and post processing effects (bloom, depth of field, etc.). These have pretty good support in Unity, with most settings being fairly easy to animate.

In Omniverse, it looks like most of the settings are there (not sure about lens flare yet), but some are layer level metadata (part of the USD file metadata). It feels like I might have to load and unload USD files to move to the next shot (which is less efficient), because those settings are metadata in the currently loaded USD file rather than properties on prims, which would be easier to animate in the Sequencer.

- I need to research more on what post processing properties can be animated in the Sequencer

- I need to see if Lens Flare is possible (not a show stopper, but I do use it in some shots for effect)

- I want to explore if the Sequencer can support multiple timelines in memory at the same time.

Physics

I have mentioned some physics already (hair bouncing, dangling cloth), which give dynamic movement as the character moves. There are different implementation strategies in Unity, and I need to explore the equivalents in Omniverse. The required support seems to be present, but it still needs some effort to set up. For example, cloth dynamics for clothes looks nice in Omniverse, but it gets into issues like how to set up colliders on the characters to make sure the cloth does not disappear inside the character.

There is also wind. Unity has “wind zones”: the hair and clothes of a character ideally should respond to the wind, and trees, bushes, grass, flags, etc. should move with the wind. Omniverse has “force fields” which look potentially useful, but I am not sure how to standardize it all across a project for consistent wind strength and direction settings.

Physics can also be useful for a pile of falling boxes, or a bouncing ball. However, physics for these operations can be limiting in Unity as it is not controlled by the Timeline, meaning you cannot scrub the Timeline to judge the timing of boxes falling due to physics.

- So I need to research into force fields in Omniverse to see how to replace the concept of wind zones in Unity.

- I also want to check any relationship between the sequencer and physics.

Lighting

Unity has a range of lighting types including point lights, spot lights, and area lights. Lights are very important in cinematic content as the lighting is often used to convey mood. Partial lighting can really add drama to a shot. Then there are “god rays” where sunlight shines through clouds onto the ground. And then there are emissive materials (materials that glow).

Omniverse seems to have similar types of lights, so everything seems possible, but I have not managed to reproduce sunbeams (“god rays”) yet, although I do think it is possible.

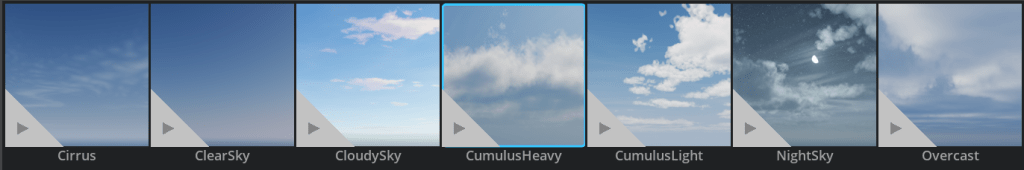

There is a “Sun Study” extension that works with “dynamic sky(s)” where it predicts the sun position based on the time of day.

The sun study extension (when loaded and enabled appropriately).

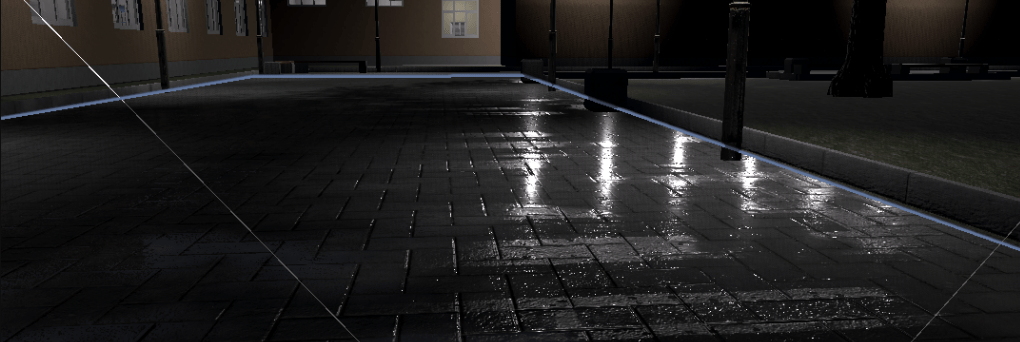

The different render pipelines also behave quite differently when it comes to shadows, which makes planning shots harder. The real-time renderer responds promptly while composing shots, but the shadows are very different. For example, with real-time rendering:

Flipping to path tracing, with no changes in the scene, the same shot is now overexposed. This can become a big time waster.

[UPDATE, June 1, 2023: Turning off Render Settings > Ray Tracing > Direct Lighting > “Sampled Direct Lighting Mode” helped a lot with the dark shadows above, and turning on “Indirect Diffuse Lighting > Indirect Diffuse GI” with intensity set to 2 made a big difference. Then it’s a matter of cranking down exposure in post processing. My characters may have stronger colors than most props, so maybe they need toning down a bit.]

So I need to work out:

- How to do “god rays” in the sky.

- How to get lighting more consistent between RTX – Real-Time and RTX – Interactive (Path Tracing).

- Can I do areas of local dark fog? (I use this to reduce light in the background of some shots, so a corridor is dark in the background, even though it’s inside with lights on.)

Visual effects

Unity (particularly HDRP) has a range of visual effects. These include volumetric clouds and fog, which look pretty good and interact with the sun (clouds can obscure the sun). There is also a particle system, which can be useful for rain falling from the sky. Using shaders, you can create puddle splashing effects, and rain drops running down windows. Then there is water in swimming pools, lakes, oceans, and rivers (including waterfalls). Closer to fog, there is also smoke and fire.

Omniverse seems to have some degree of support for many of these features, but I need to work out how to do each one. For example, fog is in the render settings window, but finding values that look good in real scenes will take some more effort. There is also a particle system and shaders, so I believe all the necessary technologies exist; I just need to work out how to put it all together. Challenges include tears running down a character’s cheeks, catching the light.

So my checklist of things to investigate with Omniverse includes:

- Volumetric clouds and shadows cast through the clouds onto the ground.

- How to simulate raindrops with the particle system.

- How to create mist in a dark scene.

- How to use shaders or similar to show rain drops creating puddles on the ground.

- How to use shaders or similar to show rain drops running down a pane of glass.

- Can tears well up in a character’s eyes, then run down the character’s cheek?

- How to make fog look good.

- How to create smoke (for angry characters) and control it appropriately.

- How to make fire.

Project management

A final note is on how to organize files on disk. In Unity there are FBX files, prefabs, prefab variants (and all the supporting files such as textures and materials). Bringing scenes from Unity across to Omniverse with the current Unity connector duplicates models per instance in the scene hierarchy, instead of reusing them (although they are working to fix this).

In Omniverse there is the Nucleus server for enterprise customers, with capabilities such as Deep Search (find models close to what you want, even without descriptions). For myself, I suspect it will be more a matter of being organized, or seeing if I can create a poor man’s search capability. I will probably just start off with directories and subdirectories to group similar assets by hand.

Tasks to do:

- How best to search through collections of models I already have.

- How to safely move a model to its own directory (it may reference other materials or textures using relative path names, meaning moving the model to my “library” may break it).

- Review the Unity connector, to see how good the model reuse support is, when available.
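For the second task above (moving a model without breaking its relative references), the core calculation might look like the sketch below. It is plain Python over path strings with made-up directory names. It assumes simple forward-slash file paths, does not handle Omniverse URLs, and does not read or edit USD files, so it only shows how a relative reference would need to be rewritten.

```python
import posixpath

def rebase_reference(ref, old_model_dir, new_model_dir):
    """Rewrite a relative asset reference so it still resolves after the
    model file moves from old_model_dir to new_model_dir."""
    if posixpath.isabs(ref):
        return ref  # absolute references are unaffected by the move
    # Work out which file the reference pointed at from the old location...
    target = posixpath.normpath(posixpath.join(old_model_dir, ref))
    # ...then express that same file relative to the new location.
    return posixpath.relpath(target, start=new_model_dir)

# Hypothetical layout: a chair model moves from an episode folder into a library.
new_ref = rebase_reference(
    "../textures/wood.png",
    old_model_dir="/projects/ep1/props/chair",
    new_model_dir="/library/chairs",
)
```

The alternative is to move the textures and materials along with the model so the relative paths stay valid, which costs disk space if several models share them.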

Conclusions

This blog post was probably written more for myself, as a checklist I need to work through before I can create full episodes in Omniverse. The list of things to work out is still pretty long. The Sequencer and animation support seem to be a major area of work, and there are many other tasks just to learn and work through one by one (such as visual effects, lighting, camera movements, etc.).

Is moving worth the effort? That is a question for another day! It is still interesting to explore.