For my hobbyist animation efforts I started with friends as voice actors. However, not having many friends… I mean, it is hard for my numerous friends to commit to being voice actors over a long period (and it’s unfortunate if the voices of characters change mid-series). The obvious answer is to write the script for the full series up front, record it all, then animate it. But I am a computer geek, so instead I have been watching with interest the progress of text-to-speech synthesis with emotions. The voices are rapidly getting better, but how do you put it all together?

Speech Synthesis

Speech synthesis (or text-to-speech) is where you type text and software generates an audio file with a voice saying the text. This is nothing new – Alexa and Google Home are household names these days.

The challenges I face for my hobby animation project include:

- I want a range of voices, unique per character (I have 20+ characters)

- I want voices that fit particular age groups (I need a bunch of school kids as well as adults)

- I want voices with EMOTION – it’s hard to create listener empathy when the voice is flat and dispassionate

To expand on emotions, consider Alexa. The voice is always calm and consistent. That is not what you want when you are telling a story and trying to get an emotional response from your audience. Nothing like a flat, unemotional voice to kill a mood.

SSML

Several of the big names (Amazon, Google, etc.) support Speech Synthesis Markup Language (SSML). This markup gives you some control over how the text is spoken, including speaking rate, volume, and pitch.

- Amazon: https://docs.aws.amazon.com/polly/latest/dg/supportedtags.html

- Google: https://cloud.google.com/text-to-speech/docs/ssml

The problem is they don’t really deliver emotion in voices. You can fudge it a bit by tweaking pitch and emphasis, but not enough.
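
To make the "tweaking" concrete, here is a minimal sketch of wrapping a line of dialog in the standard SSML `<prosody>` element. The helper name `prosody_ssml` and the sample values are my own illustration. Which attribute values each vendor and voice actually accepts varies, so check the pages linked above before relying on any of them.

```python
from xml.sax.saxutils import escape, quoteattr


def prosody_ssml(text, rate="medium", pitch="medium", volume="medium"):
    """Wrap text in <speak><prosody ...> with the given rate, pitch, and volume.

    The text is XML-escaped so characters like & and < do not break the markup.
    """
    attrs = (
        f"rate={quoteattr(rate)} "
        f"pitch={quoteattr(pitch)} "
        f"volume={quoteattr(volume)}"
    )
    return f"<speak><prosody {attrs}>{escape(text)}</prosody></speak>"


# Hypothetical "excited" delivery: faster, higher, louder.
excited = prosody_ssml("We won the match!", rate="fast", pitch="+15%", volume="loud")
print(excited)
```

The result is a string you would send to the text-to-speech service in place of plain text. It changes how fast, high, and loud the voice is, which is about as close to "excited" as this approach gets.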

Modern voice synthesis

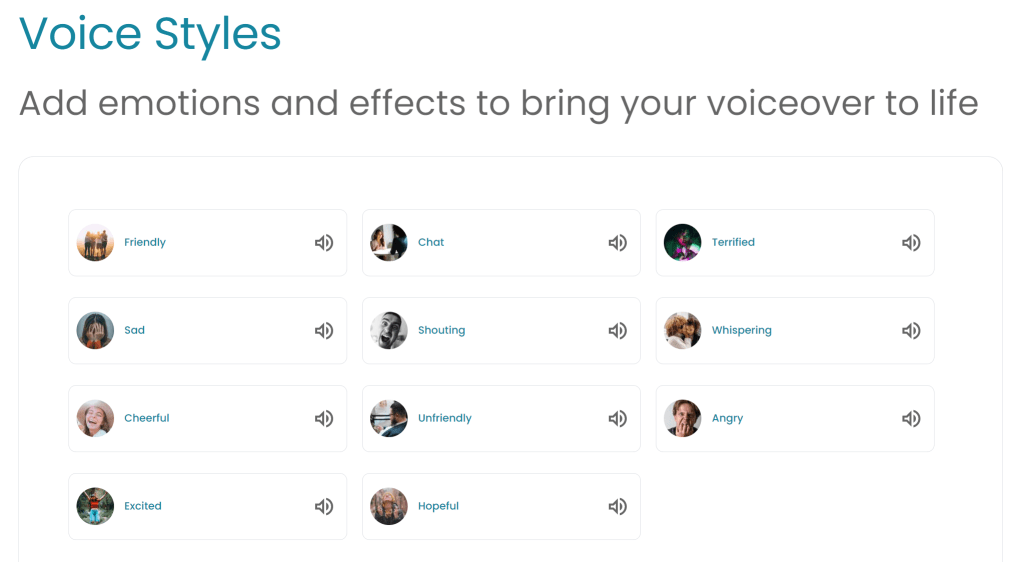

The good news is that there is now a range of vendors demonstrating voices with a range of emotions. For example:

https://www.naturalreaders.com/commercial.html

No, it will not be as good as a human actor, but in my case I am aiming for acceptable animation with low effort. A degree of emotion in the voice is still a big step up.

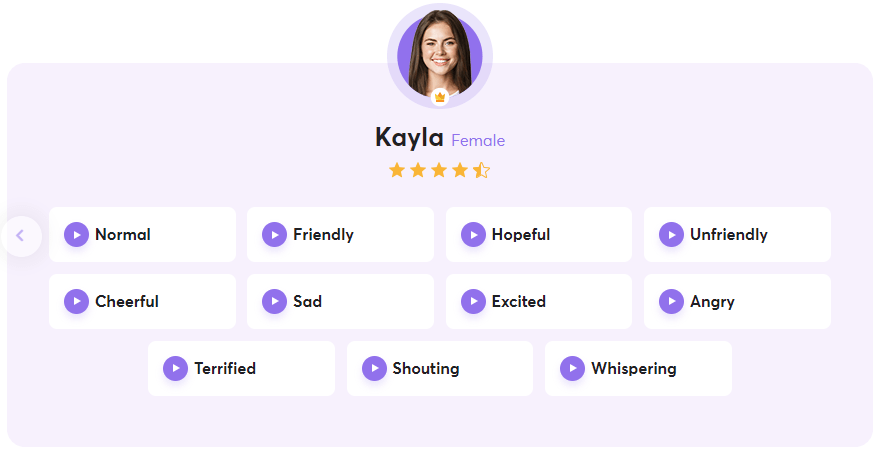

But beware before you purchase: not all of the voices on sites like the above support all emotions. And yes, I did say purchase. This is cutting-edge technology, so while some sites may be priced low to drive adoption, others are priced high because the total market is not that big. Hopefully prices will come down over time. Otherwise, write the entire series script in advance so you can record it all within the first month of your subscription! (Hang on, that sounds familiar…)

ElevenLabs

One service I am exploring that does not have explicit emotional control, but delivers rich voices, is ElevenLabs (https://elevenlabs.io). I find it rather cool because it generates random voices for you based on a few inputs. This actually suits me perfectly (although you have to get a bigger subscription for more voices). Also, you can get some degree of vocal emotion with the liberal addition of exclamation marks and appropriately placed commas.

Since I have a large cast planned, creating a range of unique voices for different age groups is ideal. And the voices are pretty good quality, with a degree of richness of expression that I find quite impressive.

ElevenLabs animated voice test – a quick test using ElevenLabs for speech synthesis.

Lip-sync

When I used speech bubbles or captions on videos (no voice track, just music), I wrote some code to make the lips move in approximation of speech. It worked pretty well and was only a few hundred lines of code. But as soon as you add real audio voice tracks, you need to get the timing right or it looks like an old-time dubbed movie.

So, once you have a voice track, how do you use it on an avatar? How do you make the mouths of characters move?

Ideally, the voice synthesis process would generate an audio file and a file of timed mouth positions. But I have not seen anyone do this yet. So instead, you will need software that can guess the mouth positions by analyzing audio files. This is built into a number of cartoon animation packages (like Adobe Character Animator and Reallusion Cartoon Animator). The good news is there is open source software, Rhubarb Lip Sync, which I used on the above video. It outputs JSON with start and end times for each mouth position, ideal for my needs.
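
As a rough illustration of working with that output, here is a minimal Python sketch that reads the mouth cues and looks up which mouth shape applies at a given time. The field names (`mouthCues`, `start`, `end`, `value`) and the use of `X` as the resting shape follow my understanding of the Rhubarb documentation, so check them against your version. The sample data, `MouthCue`, `parse_mouth_cues`, and `shape_at` are made up for the example.

```python
import json
from dataclasses import dataclass


@dataclass
class MouthCue:
    start: float  # seconds from the start of the audio
    end: float    # seconds from the start of the audio
    shape: str    # mouth shape letter, e.g. "A" to "H", or "X" for rest


def parse_mouth_cues(json_text):
    """Turn Rhubarb-style JSON text into a list of MouthCue objects."""
    data = json.loads(json_text)
    return [
        MouthCue(float(cue["start"]), float(cue["end"]), cue["value"])
        for cue in data["mouthCues"]
    ]


def shape_at(cues, t, default="X"):
    """Return the mouth shape active at time t, or the default if none is."""
    for cue in cues:
        if cue.start <= t < cue.end:
            return cue.shape
    return default


# Invented sample in the shape Rhubarb is assumed to produce.
SAMPLE = """
{
  "metadata": {"soundFile": "line01.wav", "duration": 0.47},
  "mouthCues": [
    {"start": 0.00, "end": 0.05, "value": "X"},
    {"start": 0.05, "end": 0.27, "value": "D"},
    {"start": 0.27, "end": 0.31, "value": "C"},
    {"start": 0.31, "end": 0.47, "value": "B"}
  ]
}
"""

cues = parse_mouth_cues(SAMPLE)
print(shape_at(cues, 0.10))
```

In an animation tool, each cue would become one clip or keyframe that swaps the character's mouth to the matching shape for that time span.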

Now, after a bit of C# scripting in Unity, I can take an MP3 vocal track file spliced up in Unity Timelines (to align the spoken text with actions), convert the pieces to WAV files (needed by Rhubarb), generate a JSON file with mouth movements, then create clips on a custom track that controls the mouth positions of a character. The above video used this approach.
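
My actual scripts are C# inside Unity, but the convert-then-analyze steps can be sketched outside Unity in a few lines of Python. This is only an illustration under assumptions: it uses ffmpeg for the MP3 to WAV conversion (any converter would do), it expects `ffmpeg` and `rhubarb` to be on your PATH, and the Rhubarb options shown (`-f json`, `-o`) are as I understand them from its documentation, so confirm with `rhubarb --help`. The function names are hypothetical.

```python
import subprocess
from pathlib import Path


def ffmpeg_command(mp3_path, wav_path):
    """Command line to convert an MP3 to WAV (-y overwrites existing output)."""
    return ["ffmpeg", "-y", "-i", str(mp3_path), str(wav_path)]


def rhubarb_command(wav_path, json_path):
    """Command line asking Rhubarb to write its mouth cues as JSON."""
    return ["rhubarb", "-f", "json", "-o", str(json_path), str(wav_path)]


def generate_mouth_cues(mp3_path):
    """Convert one MP3 clip to WAV, run Rhubarb on it, return the JSON path.

    Raises subprocess.CalledProcessError if either tool reports a failure.
    """
    mp3_path = Path(mp3_path)
    wav_path = mp3_path.with_suffix(".wav")
    json_path = mp3_path.with_suffix(".json")
    subprocess.run(ffmpeg_command(mp3_path, wav_path), check=True)
    subprocess.run(rhubarb_command(wav_path, json_path), check=True)
    return json_path


# Show the commands for a hypothetical clip without running anything.
print(" ".join(ffmpeg_command("line01.mp3", "line01.wav")))
print(" ".join(rhubarb_command("line01.wav", "line01.json")))
```

Calling `generate_mouth_cues("line01.mp3")` would run both tools and leave `line01.json` next to the audio, ready to be turned into mouth clips on a timeline.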

Wrapping up

This blog post is not intended to list all of the available software, or the best software, for solving every problem with computer-generated speech for animated characters. My personal interest is creating animations with relatively low effort, rather than Pixar-quality animation. I don’t have that sort of time available. The good news that I wanted to share is that speech synthesis is maturing rapidly, and text-to-speech with emotions is already appearing, with the ability to have a range of voices for different characters.

Once more voice services offer emotional voices at a hobbyist-affordable price, I hope some will also start to generate timing data for visemes. That would remove the need for audio analysis in lip-sync, improving the quality and speeding up the workflow.